Google has just announced a game-changing feature for Google Search Console: the ability to export data directly to BigQuery. We wasted no time and activated the export feature three days ago, and in our initial analysis, we were able to uncover a wealth of additional URL and keyword data that was previously unavailable in the standard Search Console reports. With this new BigQuery export feature, you can now dig deeper into your website’s performance and gain insights that you may have missed before.

2330% more different keywords in BigQuery export

We analysed the data from two websites – one small and one large. We observed a significant difference in the count of different URLs and keywords for the large website, with in default 5.000 over the api and 160.515 different keywords in the BigQuery export.

We’ve compared only one day in order to have comparable data for a day by day export.

| Search Console Frontend export* | Search Console API* | BigQuery Export | |

|---|---|---|---|

| Count of different URLs: Small website | 119 | 119 | 119 |

| Count of different URLs: Large website | 1000 | 5000 | 19.212 |

| Count of different Keywords: Small website | 1000 | 1437 | 1437 |

| Count of different Keywords: Large Website | 1000 | 5000 | 160.515 |

| Count of different Keywords containig word X: Small website | 203 | 203 | 203 |

| Count of different Keywords containig word X: Large website | 13 | 13 | 661 |

As you can see in the table above, big sites get much more data from Google. But also small websites with more than 5k different keywords can benefit from the advantages with the export.

Especialy the fact that the count of unique keywords containing a special word is much higher for the large website shows that in GSC the amount of data what is saved is limited compared to the export.

*By filtering data, creating more properties etc. it is possible to get more rows, but it needs to be set up and you can’t ever be sure to get all the data out.

Many One impression keywords (on that day)

88% of the keywords for the large website had only one impression, and only 7% had one or more clicks that day. However, we expect to see more keywords with clicks and impressions over a more extended period.

Daily data export

It’s important to note that the data export process starts on the day of activation and begins exporting data day by day, with no backfill of the data.

Data fields in the exported data

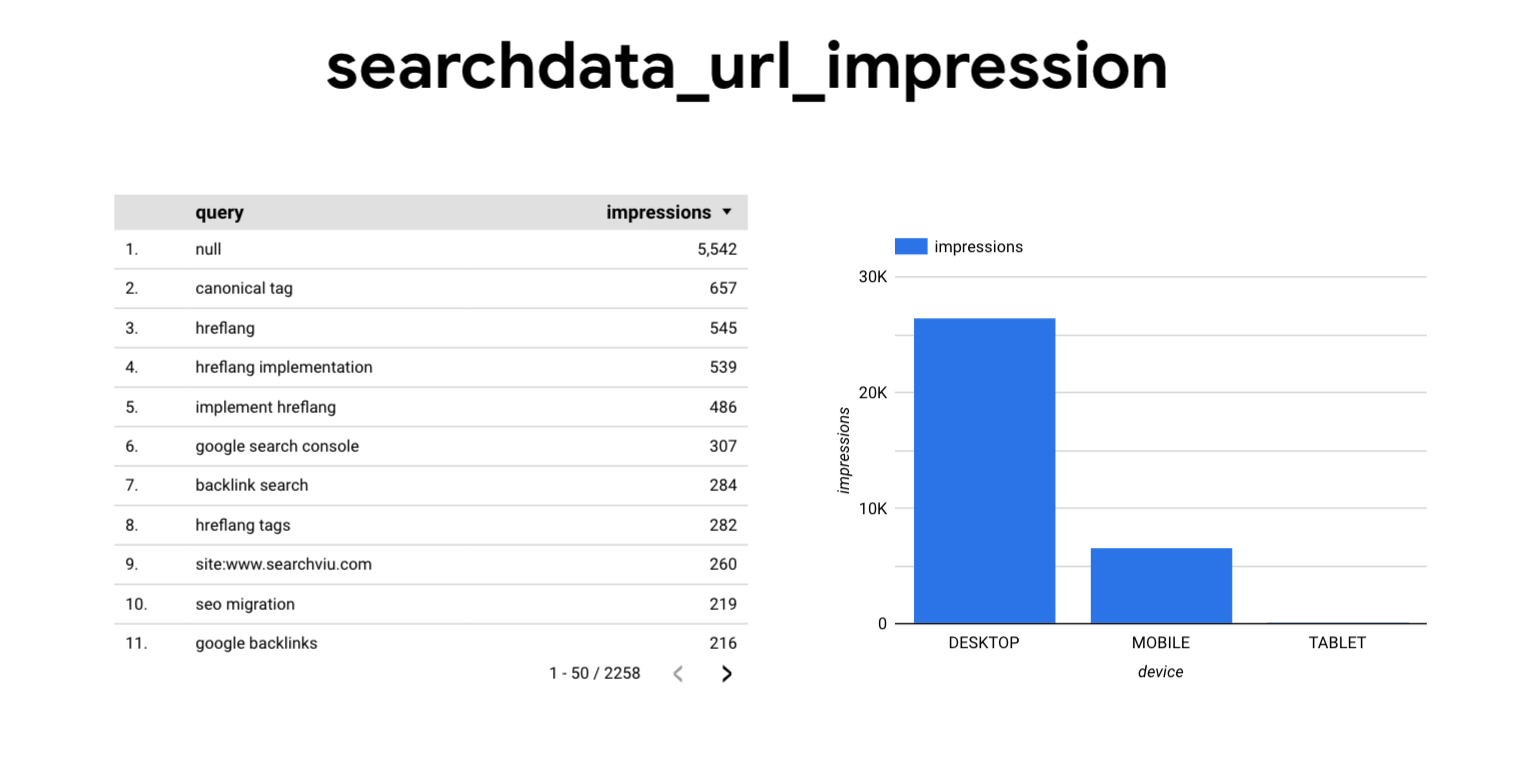

The exported data contains fields such as date, country, search type, etc., similar to the query fields in the Google Search Console, including all filters to filter by search appearance. The data tables are created in the BigQuery data set, and having the data in a table format combined with each other makes it easier to analyze the data with charts, pivot tables, etc.

You can find the complete table references here.

Getting started with exporting data to BigQuery

A few words before we begin: Before diving into exporting Google Search Console data through BigQuery, it is important to note that data storage and query costs in Google Cloud Platform can accumulate over time. To avoid unexpected charges, it is recommended to have a basic understanding of the Google Cloud Platform pricing model and to regularly review your usage and costs. It is also helpful to have a general overview of the Google Cloud Platform before starting.

For each property you export to BigQuery, you should think about creating a designated project in Google Cloud Platform. It’s not possible to change the assigned dataset name and exporting two GSC Properties into the same project, meaning writing data from these properties in the same table. It can make sense to grant the same access to both properties, but if you want more granular access control we suggest you create two projects.

With that in mind, let’s begin the step-by-step guide to exporting your Search Console data.

1.

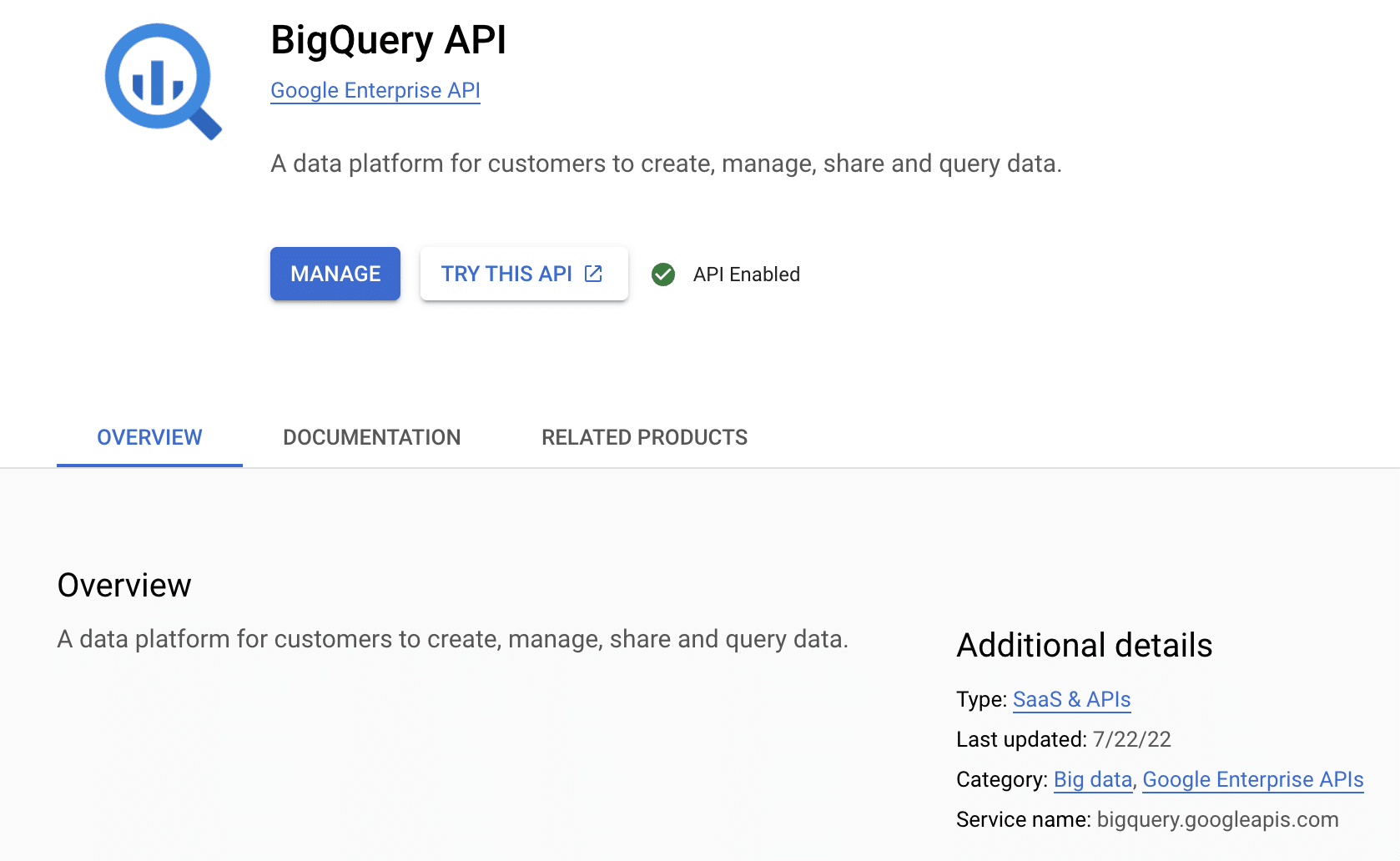

Enable BigQuery in your Google Cloud project

Navigate to APIs & Services > Enabled APIs & Services in the sidebar of Google Cloud Console. If BigQuery is not enabled, click + ENABLE APIS AND SERVICES to enable the BigQuery API. Or just click here.

2.

Grant Search Console access to your Google Cloud project

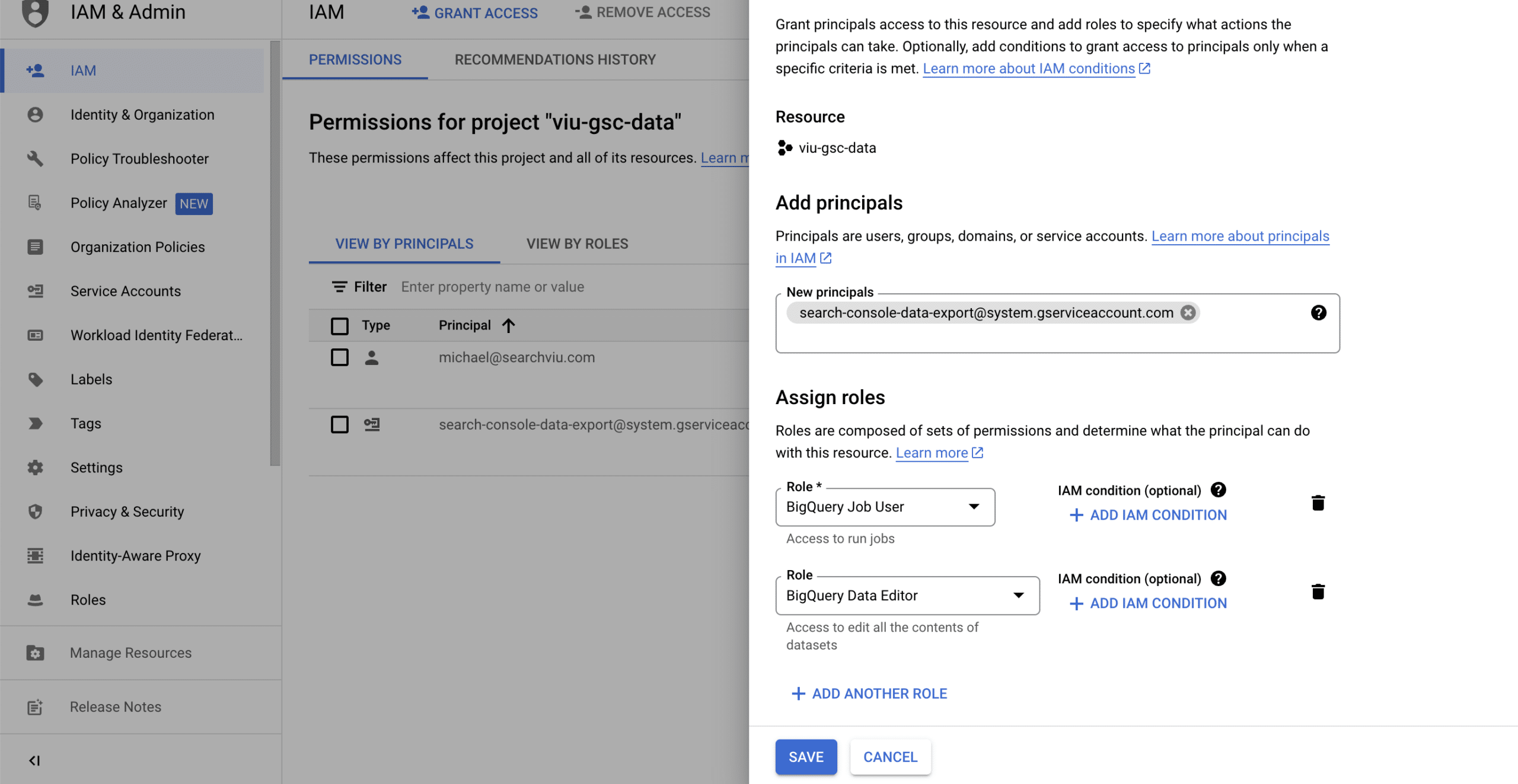

Navigate to IAM and Admin in the sidebar of Google Cloud Console. Or click here.

Click + GRANT ACCESS to open a side panel that says Add principals. In New Principals, paste the following service account name:

search-console-data-export@system.gserviceaccount.com

Grant it two roles: BigQuery Job User & BigQuery Data Editor

3.

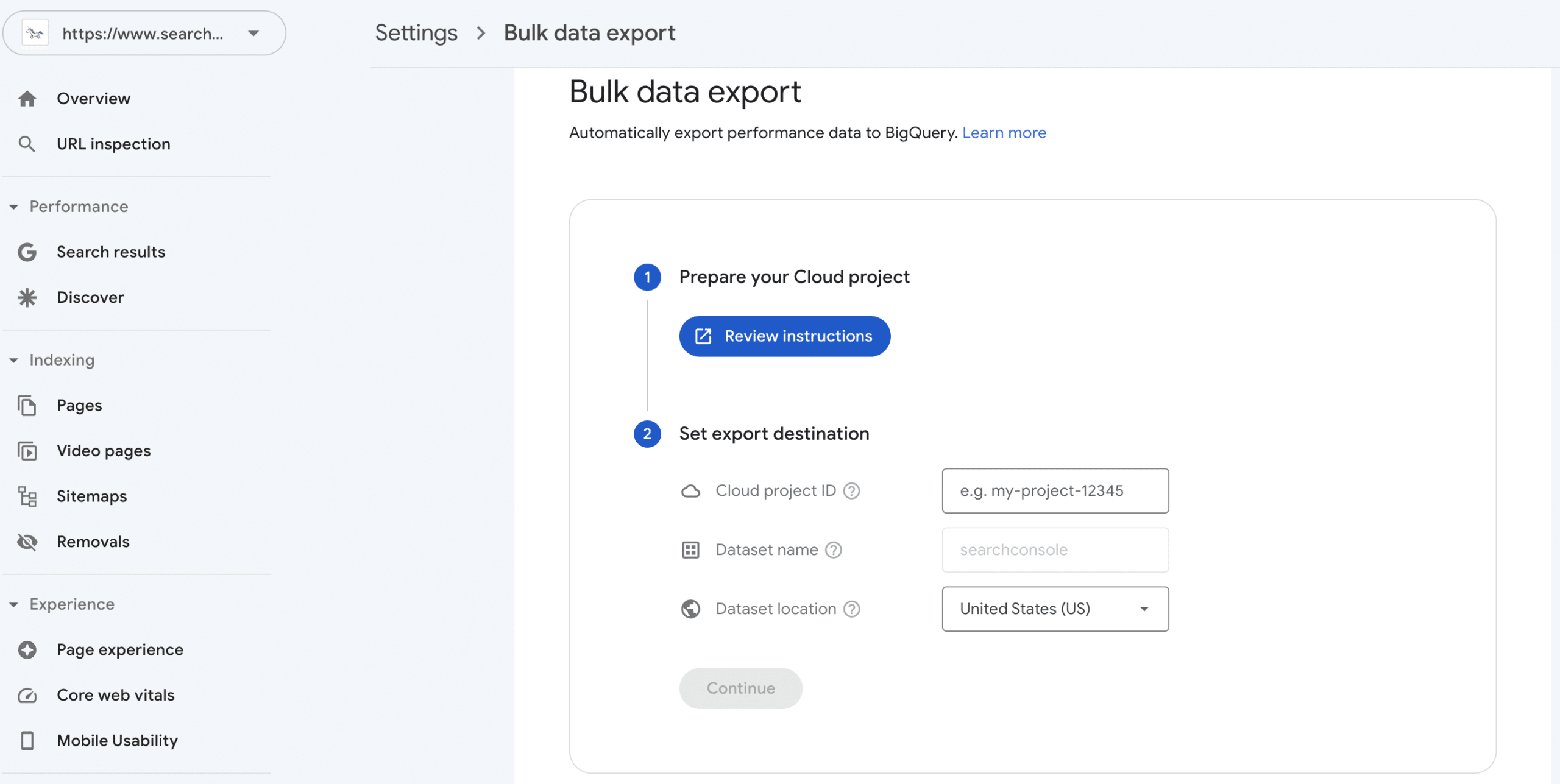

Set up your Google Cloud project in Search Console

Go to Settings > Bulk data export for your property in Search Console. Copy the project ID (the name of the Google Cloud project you’ve created) into the Cloud project ID field.

Select a location for your dataset from the list and click continue.

After that it takes a day or two until the first data arrives in BigQuery.

By following these steps, you can export Google Search Console data to BigQuery through daily bulk exports.

You can find more information about the setup of the export in these articles from Google:

https://support.google.com/webmasters/answer/12917675

https://developers.google.com/search/blog/2023/02/bulk-data-export

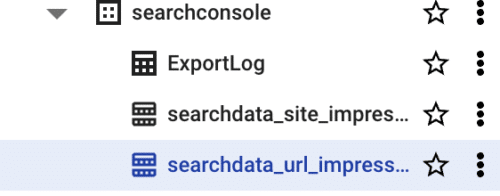

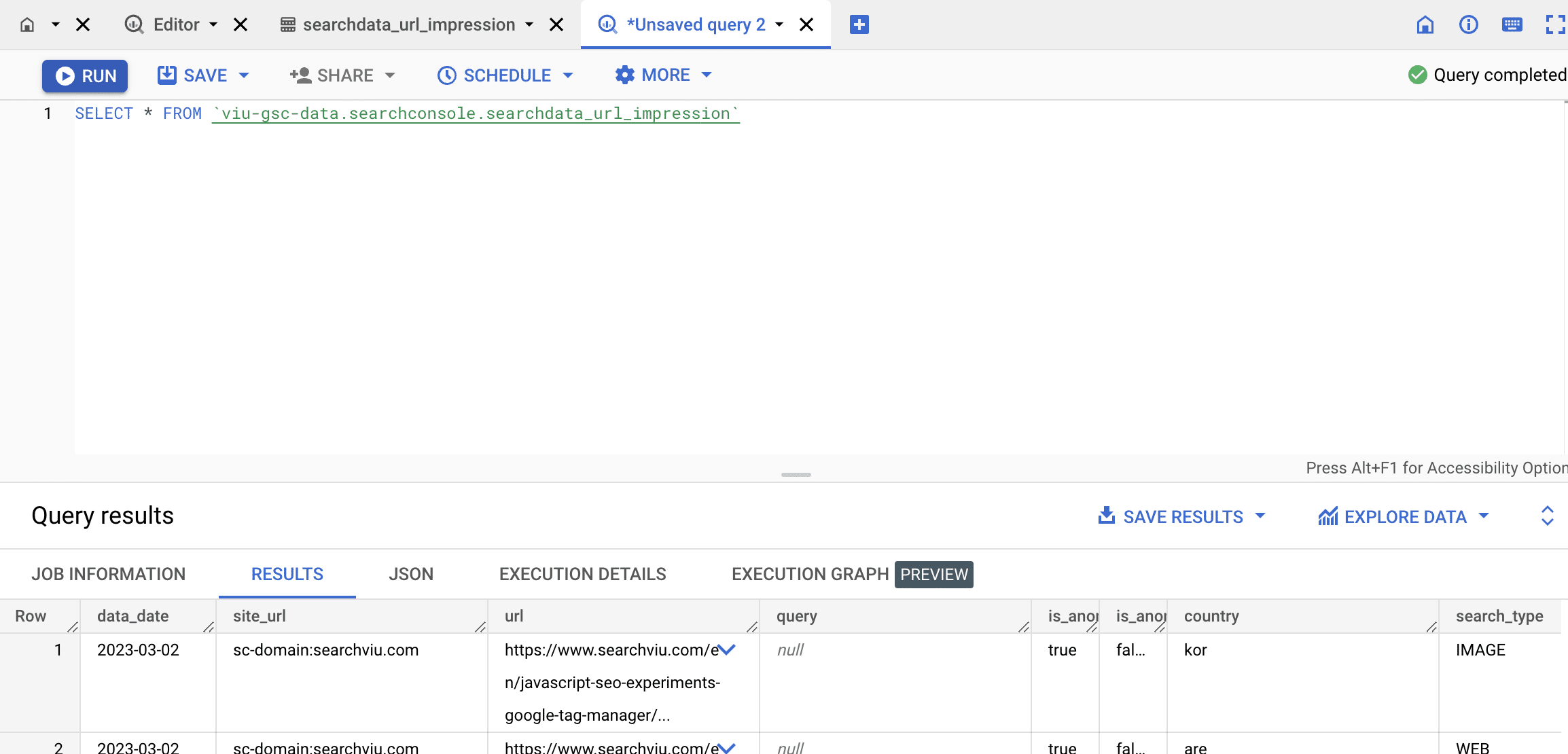

Accessing the data

After the first data has been imported to BigQuery you can create some SQL statements to explore the data. But it’s much easier to explore the data directly with Google Looker Studio.

To do so, navigate to Google Cloud Plattform -> BigQuery and select the dataset and a table.

Click on Query and change the query to:

SELECT * FROM `{yourproject}.searchconsole.searchdata_url_impression`

(this queries all columns from the table over the complete timeframe)

Run the query.

Click on Explore Data -> Explore with Looker Studio.

Here you can explore the data without writing any additional SQL Query.

Summary

The extended export of GSC data to BigQuery gives much more insights. But also a higher amount of data to manage and analyze. Because of the daily export we recommend to start soon – because you are losing data every day it is not activated.

If you’re interested in a Data Warehouse Service that takes care of all the export hassles, get in touch with us.