Has this happened to you? You check the “Crawl Errors” report in Google Search Console (formerly known as Webmaster Tools) and you see so many crawl errors that you don’t know where to start. Loads of 404s, 500s, “Soft 404s”, 400s, and many more… Here’s how I deal with big amounts of crawl errors.

Important note: This article is out of date, as it deals with error reports from the old Google Search Console, which no longer exist. Comments are closed.

This guide was first published on rebelytics.com in 2015 and has since then been updated several times and moved to this blog.

Contents

Here’s an overview of what you will find in this article:

- Don’t panic!

- First, mark all crawl errors as fixed

- Check your crawl errors report once a week

- The classic 404 crawl error

- 404 errors caused by faulty links from other websites

- 404 errors caused by faulty internal links or sitemap entries

- 404 errors caused by Google crawling JavaScript and messing it up 😉

- Mystery 404 errors

- What are “Soft 404” errors?

- What to do with 500 server errors?

- Other crawl errors: 400, 503, etc.

- List of all crawl errors I have encountered in “real life”

- Crawl error peak after a website migration

- Summary

So let’s get started. First of all:

Don’t panic!

Crawl errors are something you normally can’t avoid and they don’t necessarily have an immediate negative effect on your SEO performance. Nevertheless, they are a problem you should tackle. Having a low amount of crawl errors in Search Console is a positive signal for Google, as it reflects a good overall website health. Also, if the Google bot encounters less crawl errors on your page, users are less likely to see website and server errors.

First, mark all crawl errors as fixed

This may seem like a stupid piece of advice at first, but it will actually help you tackle your crawl errors in a more structured way. When you first look at your crawl errors report, you might see hundreds and thousands of crawl errors from way back when. It will be very hard for you to find your way through these long lists of errors.

Does this screenshot make you feel better? I bet you’re better off than this webmaster 😉

My approach is to mark everything as fixed and then start from scrap: Irrelevant crawl errors will not show up again and the ones that really need fixing will soon be back in your report. So, after you have cleaned up your report, here is how to proceed:

Check your crawl errors report once a week

Pick a fixed day every week and go to your crawl errors report. Now you will find a manageable amount of crawl errors. As they weren’t there the week before, you will know that they have recently been encountered by the Google bot. Here’s how to deal with what you find in your crawl errors report once a week:

The classic 404 crawl error

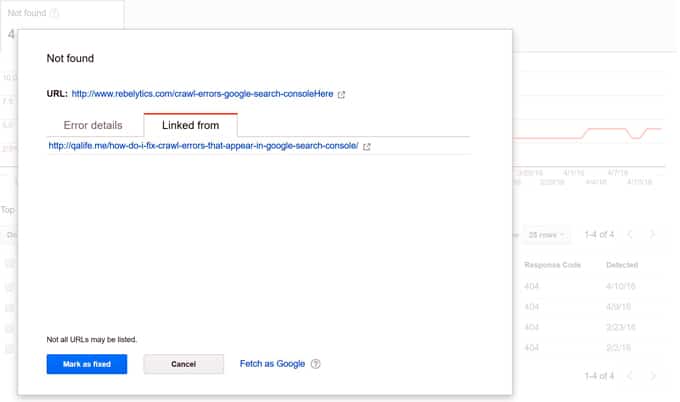

This is probably the most common crawl error across websites and also the easiest to fix. For every 404 error the Google bot encounters, Google lets you know where it is linked from: Another website, another URL on your website, or your sitemaps. Just click on a crawl error in the report and a lightbox like this will open:

Please note that the info in the “Linked from” tab is not always up-to-date. It can contain URLs that don’t exist anymore or that don’t link to the error URL anymore. This is because in this tab, Google lets us know where it found the error URL, not where it is currently linked (as the name might suggest).

Did you know that you can download a report with all of your crawl errors and where they are linked from? That way you don’t have to check every single crawl error manually. Check out this link to the Google API explorer. Most of the fields are already prefilled, so all you have to do is add your website URL (the exact URL of the Search Console property you are dealing with) and hit “Authorize and execute”. Let me know if you have any questions about this!

Now let’s see what you can do about different types of 404 errors.

404 errors caused by faulty links from other websites

If the false URL is linked to from another website, you should simply implement a 301 redirect from the false URL to a correct target. You might be able to reach out to the webmaster of the linking page to ask for an adjustment, but in most cases it will not be worth the effort.

404 errors caused by faulty internal links or sitemap entries

If the false URL that caused the 404 error for the Google bot is linked from one of your own pages or from a sitemap, you should fix the link or the sitemap entry. In this case it is also a good idea to 301 redirect the 404 URL to the correct destination to make it disappear from the Google index and pass on the link power it might have.

404 errors caused by Google crawling JavaScript and messing it up 😉

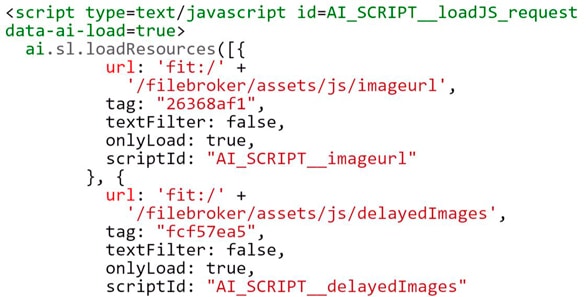

Sometimes you will run into weird 404 errors that, according to Google Search Console, several or all of your pages link to. When you search for the links in the source code, you will find they are actually relative URLs that are included in scripts like this one (just a random example I’ve seen in one of my Google Search Console properties):

According to Google, this is not a problem at all and this type of 404 error can just be ignored. Read paragraph 3) of this post by Google’s John Mueller for more information (and also the rest of it, as it is very helpful):

Mystery 404 errors

In some cases, the source of the link remains a mystery. The data that Google provides in the crawl error reports is not always 100% reliable. For example, the information in the “Linked from” tab is not always up-to-date and can contain URLs that haven’t existed for many years or don’t link to the error URLs anymore. In such cases, you can still set up a 301 redirect for the false URL.

Remember to always mark all 404 crawl errors that you have taken care of as fixed in your crawl error report. If there are 404 crawl errors that you don’t know what to do about, you can still mark them as fixed and collect them in a “mystery list”. Should they keep showing up again, you know you will have to dig deeper into the problem. If they don’t show up again, all the better.

If you have a case of mystery 404 errors, feel free to leave me a comment at the end of this article. I’ll be happy to check out your problem.

Let’s have a look at the strange species of “Soft 404 errors” now.

What are “Soft 404” errors?

This is something Google invented, isn’t it? At least I’ve never heard of “Soft 404” errors anywhere else. A “Soft 404” error is an empty page that the Google bot encountered that gave back a 200 status code.

So it’s basically a page that Google THINKS should be a 404 page, but that isn’t. In 2014, webmasters started getting “Soft 404” errors for some of their actual content pages. This is Google’s way of letting us know that we have “thin content” on our pages.

Dealing with “Soft 404” errors is just as straightforward as dealing with normal 404 errors:

- If the URL of the “Soft 404” error is not supposed to exist, 301 redirect it to an existing page. Also make sure that you fix the problem of non-existent URLs not giving back a proper 404 error code.

- If the URL of the “Soft 404” page is one of your actual content pages, this means that Google sees it as “thin content”. In this case, make sure that you add valuable content to your website.

After working through your “Soft 404” errors, remember to mark them all as fixed. Next, let’s have a look at the fierce species of 500 server errors.

What to do with 500 server errors?

500 server errors are probably the only type of crawl errors you should be slightly worried about. If the Google bot encounters server errors on your page regularly, this is a very strong signal for Google that something is wrong with your page and it will eventually result in worse rankings.

This type of crawl error can show up for various reasons. Sometimes it might be a certain subdomain, directory or file extension that causes your server to give back a 500 status code instead of a page. Your website developer will be able to fix this if you send him or her a list of recent 500 server errors from Google’s Webmaster Tools.

Sometimes 500 server errors show up in Google’s Search Console due to a temporary problem. The server might have been down for a while due to maintenance, overload, or force majeure. This is normally something you will be able to find out by checking your log files and speaking to your developer and website host. In a case like this you should try to make sure that such a problem doesn’t occur again in future.

Pay attention to the server errors that show up in your Google Webmaster Tools and try to limit their occurrence as much as possible. The Google bot should always be able to access your pages without any technical barriers.

Let’s have a look at some other crawl errors you might stumble upon in your Google Webmaster Tools.

Other crawl errors: 400, 503, etc.

We have dealt with the most important and common crawl errors in this article: 404, “Soft 404” and 500. Once in a while, you might find other types of crawl errors, like 400, 503, “Access denied”, “Faulty redirects” (for smartphones), and so on.

In many cases, Google provides some explanations and ideas on how to deal with the different types of errors.

In general, it is a good idea to deal with every type of crawl error you find and try to avoid it showing up again in future. The less crawl errors the Google bot encounters, the more Google trusts your site health. Pages that constantly cause crawl errors will be thought to also provide a poor user experience and will be ranked lower than healthy websites.

You will find more information about different types of crawl errors in the next part of this article:

List of all crawl errors I have encountered in “real life”

I thought it might be interesting to include a list of all of the types of crawl errors I have actually seen in Google Search Console properties I have worked on. I don’t have much info on all of them (except for the ones discussed above), but here we go:

Server error (500)

In this report, Google lists URLs that returned a 500 error when the Google bot attempted to crawl the page. See above for more details.

Soft 404

These are URLs that returned a 200 status code, but should be returning a 400 error, according to Google. I suggested some solutions to this above.

Access denied (403)

Here, Google lists all URLs that returned a 403 error when the Google bot attempted to crawl them. Make sure you don’t link to URLs that require authentication. You can ignore “Access denied” errors for pages that you have included in your robots.txt file because you don’t want Google to access them. It might be a good idea though to use nofollow links when you link to these pages, so that Google doesn’t attempt to crawl them again and again.

Not found (404 / 410)

“Not found” is the classic 404 error that has been discussed above. Read the comments for some interesting information about 404 and 410 errors.

Not followed (301)

The error “not followed” refers to URLs that redirect to another URL, but the redirect fails to work. Fix these redirects!

Other (400 / 405 / 406)

Here, Google groups everything it doesn’t have a name for: I have seen 400, 405 and 406 errors in this report and Google says it couldn’t crawl the URLs “due to an undetermined issue”. I suggest you treat these errors just like you would treat normal 404 errors.

Flash content (Smartphone)

This report simply lists pages with a lot of flash content that won’t work on most smartphones. Get rid of flash!

Blocked (Smartphone)

This error refers to pages that could be accessed by the Google bot, but were blocked for the mobile Google bot in your robots.txt file. Make sure you let all of Google’s bots access the content you want indexed!

Please let me know if you have any questions or additional information about the crawl errors listed above or other types of crawl errors.

Crawl error peak after a website migration

You can expect a peak in your crawl errors after a website migration. Even if you have done everything in your power to prepare your migration from an SEO perspective, it is very likely that the Google bot will encounter a big number of 404 errors after the relaunch.

If the number of crawl errors in your Google Webmaster Tools rises after a migration, there is no need to panic. Just follow the steps that have been explained above and try to fix as many crawl errors as possible in the weeks following the migration.

Summary

- Mark all crawl errors as fixed.

- Go back to your report once a week.

- Fix 404 errors by redirecting false URLs or changing your internal links and sitemap entries.

- Try to avoid server errors and ask your developer and server host for help.

- Deal with the other types of errors and use Google’s resources for help.

- Expect a peak in your crawl errors after a website migration.

Important note: This article is out of date, as it deals with error reports from the old Google Search Console, which no longer exist. Comments are closed.

Hi… Thanks for the information… This below is the mail I got from Google few days ago

“Search Console has identified that your site is affected by 2 new Coverage related issues. This means that Coverage may be negatively affected in Google Search results. We encourage you to fix these issues.

Top new issues found, ordered by number of affected pages:

Submitted URL not found (404)

Redirect error”

Please, what should I do???

Please note that this article deals with reports from the old Google Search Console, which no longer exist, and your questions are about errors from the new Google Search Console.

For the error “Submitted URL not found (404)”, you can follow the advice for 404 errors described above.

The redirect error normally means that a URL redirects, but the redirect does not lead to a working target. You can check if there is a redirect loop or a similar problem.

I hope this helps. Please let me know if you have any further questions.

Hi thanks for this helpful guide. Unfortunately I am still confused about the errors showing up on my google search console. I think It happened after I moved URLs back in December. I now have 31 errors that simply say – Submitted URL has crawl issue, many of the links are to media items uploaded to my media library, some never used or deleted since. Others on pages that seem fine. I pressed the validate all fixes back on 6th March and now at 20th April it still says pending. I am not really sure what to do but I know my google rating has gone down enormously. In December my old site was close to 250 hits from google a day no I get about 2. Really lost what to do now.

Hi Jade,

If you changed your URLs last year, you should make sure that all old URLs that ever existed on your website now redirect to URLs that work and that have the same or similar content as the old URLs.

Most of you crawl errors are probably nothing to worry about and if you lost most of your organic search traffic, there are probably other reasons for this, even if the crawl errors might be related to some them.

Please let me know if there is anything I can do to help you.

Hi! Google is unable to index our homepage url https://wineshoplouisville.com/ and is showing a Crawl Anomaly error in Search Console. When I inspect the page source in Chrome and click on Network tab, it shows a 3.19sec load with 404 errors on two elements (header_bg.gif and title_bg.png), and 500 error on http://www.wineshoplouisville.com document. It seems that Google has grabbed all the other urls in our site though. I am not a developer, nor am I well-versed in wordpress (we are using All in One SEO plugin). Could this be an issue with the homepage image carousel or another plugin? Or is it something else altogether? (A couple side notes: This site was built a while ago and is using php5.6. As far as I understand it cannot be updated to php7 without a considerable amount of work. We changed hosting providers recently and our version of wordpress was updated at that time, and an SSL installed. Hosting provider assures us redirects are set up properly and there are no server side issues.) Thanks in advance for any thoughts!

Hi Shelby,

I checked your home page and it does indeed give back a 500 error, although it is working perfectly. This status code is the problem you should take care of first – you can worry about the 404s on the image files later, as they are not the reason for your indexing problems.

Your home page should be giving back a 200 status code. Google probably won’t index a page as long as it gives back a server error, even if it renders perfectly in the browser.

I’m not sure what this problem is caused by, but it might be a good idea to deactivate your plugins one by one to check if one of them might be causing this.

I hope this helps for now!

Best regards,

Eoghan

Hi

I am working on wordpress. Elementor plugin and yoast seo are there. When i create a page, i simply add title. And URL is generated with that title. But after updating all SEO information, there is new URL. But search console is crawling with old URL and 404 error is displaying.

Example:

1. Before Updation SEO information URL: http://healthgeekss.com/health/services/weight-loss/wl-sideeffects/

2. After Updation SEO information URL: http://healthgeekss.com/health/services/weight-loss/side-effects-of-rapid-weight-loss/

Google is crawling by first. Instead of 2nd but on blog there is 2nd updated URL. How to overcome it.

Please help me.

Hi Shikha,

Thank you for your question.

A quick solution would be to set up a 301 redirect from the old URL to the new URL. This would at least fix the 404 error problem.

You can then also check if there are any internal links, sitemap entries or similar pointing to the old URL. If so, they should be updated.

It might be possible to fix the underlying problem by making sure you don’t publish articles or pages before you’ve defined the final URL.

I hope this helps for now. Please let me know if you have any further questions.

Best regards,

Eoghan

Hi Eoghan,

I am working on a website is showing JavaScript in the meta description in Bing/Yahoo. For some reason, the homepage of the website doesn’t the title or meta description in Google.

Happy to share the URL with you in private.

Thanks

Sam

Hi Sam,

I will send you an e-mail so that you can share more information with me.

Hi Eoghan,

Thanks for this article. I have a question around SPA (Single Page Applications). I am currently get a soft 404 as I’m using Angular on my site to show content dynamically. I’m assuming this is the reason why I’m getting this error and why search engines are not crawling my site. Is there a strategy to overcome this for SPAs?

Hi Will,

Unfortunately, there’s not a simple answer to your question, as SEO for SPAs / JS frameworks is a complex topic, but here are a few things you could start with:

– Make sure every page on your website has a URL of its own (without using URL fragments).

– Make sure Google can render each page on your website.

– Consider server-side rendering as an option.

The latest versions of Angular have several SEO-friendly solutions.

I hope this helps for now!

Hello Eghan Henn.

I deleted many posts, categorys, pages, tags in my wordpress.

Now I give 404 errors URLs in my webmaster, Crawl errors.

please tell me how to fix it step by step?

Hi Milad,

Sure, here are the steps:

1. Export 404 errors.

2. Find a matching redirect target for every one of them.

3. Set up redirects (only for URLs that have a matching target).

I hope this helps!

Hello Eoghan, thank you for your useful post.

I’m having an huge increase of 404 errors on my website.

Since a couple of days, Googlebot (smartphone) crawls about 10k/day fake URLs.

URLs are like:

– /ebook-murray-lawn-mower-download-pdf.pdf

– /kim-passion.pdf

– /leddy-may-other-poems.pdf

– /ebook-minutes-of-stated-meetings-download-pdf.pdf

and so on… I never had those files on my website.

The strange thing is that for the URLs above, Google does not report the link source.

For all the other “normal” 404 errors, Google report the link source, both if it’s internal (e.g. sitemap or site page) or external (external site page that links to mine).

I searched for the URLs in all the website files (also cache files), databases, etc. There aren’t matching in any way.

Is anyone experiencing the same behavior?

Hello Franco,

This looks nasty! When you do a site: search for your domain on Google, you can see that there are thousands of URLs (mainly PDFs) indexed that don’t belong there. They all return 404 errors and they all seem to have had random content at the time they were crawled.

I’ve seen similar cases in the past on websites that had been hacked. Did you have any issues of that type in the past?

Hi,

This blog looks great. What if I get redirected error when i try to fetch and render in search console. Can any one guide me? How to resolve this ?

Hi Akshay,

What does the error say exactly? If it simply states that the URL is redirected, you should do fetch and render for the redirect target. If it’s a redirect from http to https, for instance, you might have to switch properties. If there’s an actual problem with the redirect (like a redirect loop), then you might want to fix that first.

Feel free to share more details about the error and I will be happy to help.

Hi Eoghan,

I have a similar problem that most of the people are commenting here..i.e 404 crawl errors in GWT…kindly check this snap https://prnt.sc/lxrgd8..this is a snap from GWT with all the errors in it….

i have tried for a couple of months to fix them by making ‘mark as fixed’ option…but these crawl errors gets generated almost every day…i have no idea where from these URLs are getting generated….

I have disabled almost all plugins…so that no problems arise out of plugins…

Moreover there is no point of making 301 redirects as these URLs are not from any contents from site and i will not be able to stop doing 301 redirects as the URLs gets generated every day…

No ‘LINKED FROM’ tab found in GWT…

what to do? Where from these URLs are fetching…ran my site for checking malware, no negative result found… can u plz help????

kindly come up with an answer asap…its troubling me a lot..

Thanks.

Brett Ander

Hi Brett,

This does indeed look strange. One thing you should be aware if is that by marking errors as fixed, you don’t actually fix them. You just make the URLs disappear from the report until they are crawled again.

This does look like some kind of malware problem to me. If you like, you can send me the URL of your website via e-mail and I will have a closer look.

Hi Eoghan,

Have you ever seen smartphone crawl errors like this one below?

size/x-large/page/2/%3Ca%20href=

I have hundreds of links with %3Ca%20href= appended to regular links.

Hi Nicolina,

Thank you for your question. “%3Ca%20href=” is “<a href=”, percent encoded.

This looks like a problem with broken internal links to me. There’s probably an error in one of the links in your page template that appends “<a href=” to the URLs of internal links or that simply links to “<a href=” as a relative link.

You can find this error by inspecting the source code of your page or by crawling your website with a tool like Screaming Frog. You can also send me the URL of your website via e-mail and I’ll have a look at it, if you like.

Hi Eoghan,

Very nice article. You have covered almost every possible scenario, except than what I am facing now 🙁

I see an strange issue in some of the websites who for some reason want to crawl my site and index it, specially Google Webmasters and Bing Webmasters.

The issue is that they are unable to show the screenshot of my website as their thumbnail !! (but it’s ok on some services like gtmetrix)

Here is the website: https://profile.center/

We have checked everything inside our codes, and found nothing…

Can you help me understand the reason?

Best,

Shahram

Hi Shahram,

Thank you very much for your comment. Could you please send me some additional information to help me understand your question better? Which thumbnail images are you referring to?

Hi Eoghan Henn,

I read your full article. Its really informative & well explained. My site has thousands of 404 errors in webmasters tools. The problem is that there is no linked page details. All the 404 errored paged were double encoded . For example , I have urlencoded the paged with “/” symbol to %2F in url parameter. But google showing 404 pages with “%252F” (google again urlencoded it % symbol to %25) . How to deal this type of errors. Any idea ? my site address is https://sapstack.com and the problamtic pages came from the section https://sapstack.com/tables

Hi Syam,

Sorry to hear about your URL encoding problem. I had a look at the URLs from your /tables/ directory that are currently indexed by Google and I couldn’t find any examples of error URLs, so that’s a good sign. Can you tell from the crawl error reports when this type of error was first detected? Maybe there was a temporary problem with your URL encoding that caused the errors. If Google is still re-crawling the false URLs you might be able to set up redirect rules to send the bot back to the correct version of each URL.

If you send me some more detailed information via email, I’ll be happy to have a closer look.

plz help me how can i fix this 🙁

URL Last Crawled

01 Sep 2018

Last Crawl Result

Banned Domain Or IP

Hi John,

This is an error message from Majestic, and not from Google Search Console, right? I’ll be happy to help you if you send me some more information.

Why will irrelevant crawl errors not show up again if I mark everything as fixed? I think it will be better if I can determine all critical errors right the first time and fix them immediately. A week may be too long to take action.

Hi Lina,

Yes, you’re right! If you’re able to analyse all of the errors straight away, then that’s the best thing to do. But if there’s a lot of data and you want to focus on the most important errors first, then marking everything as fixed is a good idea, because it allows you to start from zero and only address issues that were recently encountered by Google’s bots (the ones that show up again).

Hello Eoghan,

Thanks for the article, it’s really helpful, while we try to figure out what is happening on our site. We identified thousands of low quality posts on our WordPress site and deleted them from the site. We’ve updated any internal links pointing to those pages, so they are no longer being linked too. But since these changes, we’re still seeing within Google Search Console that our Not found errors has been increasing dramatically. We 301 redirected a large portion of these URL’s, but some we just left as a 404 as Google has mentioned in many instances this shouldn’t be a problem. Where I am concerned is that these URL’s are still showing up in the not found error reports. Also, they are showing ‘linked from’ URL’s that either no longer exist or they do exist, but there isn’t a URL to the error page. It feels like Google is crawling a cached version of our site or has a snapshot from 2-3 months ago, rather than showing the actual crawl errors today. We can’t seem to find where/how Google is displaying these URL’s. Any suggestions?

Thank you,

BJ

Hi BJ,

What you’re seeing is normal behaviour by Google. Known URLs will be re-crawled regularly, even if they no longer have internal links pointing to them and if they return a 404 error. The “linked from” tab in GSC shows where Google discovered a URL, but the links are normally not removed from the report when they stop existing.

The only way to stop Google from re-crawling your old URLs is redirecting them to a matching target. If you manage to identify a good redirect target for all of the old URLs you eliminated, that’s what I would recommend to do.

If it’s not possible to redirect all of the old URLs, you can also consider changing the status code of the removed URLs from 404 to 410. A 410 status code signals that the content was removed intentionally and Google will most likely re-crawl those URLs less frequently in future and eventually stop re-crawling them less frequently.

I hope this helps! Please let me know if you have any further questions.

Best regards,

Eoghan

Hi Eoghan,

This is a very helpful article. We use to get multiple 404 errors due to javascript and kept scratching our head to figure out what going on with the website and than we would request for a manual crawl, but now as you said such errors can be neglected, we are at great relieve. Will keep visiting this page if such error again show up. Thanks for this post.

If these errors show up again and again, or if there are lots of them, it’s worth checking where they’re coming from and fixing them. If there’s just an occasional error of this type, it’s probably not going to do any harm.

Hi Eoghan,

Great article > thank-you

Recently I was caught with a Yoast update issue causing Google and the other major SE to index every image as its specific unique URL page. I installed the Yoast Purge plugin.

But I am still getting URL 404 crawl errors now months later.

Will this clear sooner or later or do I need to take some other manual actions.

Thanks Darren

Hi Darren,

Thank you for your comment. I’m glad you liked the article.

The Yoast Purge plugin sets a 410 status code (instead of 404) for all of the attachment URLs. You can check your error reports to see whether the URLs have 404 or 410 errors. You can also double check the status codes with a tool like https://httpstatus.io. If they are 410 errors, everything’s fine. You’ll just need some patience until they all disappear. If you’re still seeing 404 errors, then either something isn’t working with the Purge plugin, or the errors were caused by a different problem.

I hope this helps for now. If you have any further questions, please just let me know.

Hi Eoghan,

I see some comments above about the Yoast plug-in, but nothing specific to the issue I’m having. I’m finding that the Yoast tags are coming up as soft 404 errors in Google Search Console. Do you know how I can continue to use Yoast without these errors?

Thanks!

Hi CL,

Nice website, I really like the design.

Tags aren’t a Yoast feature, they’re a standard WP feature, and it looks like you’ve deactivated some of them at some stage in the past (good idea). Some of your old tag URLs are now giving back 404 errors. On the 404 error page, there are links to other tags (at the bottom), which give back 200 status codes, but don’t have any content. These are the ones that show up as soft 404s in your crawl error reports.

Here’s what I would do: Deactivate tags altogether in WordPress, remove the links from the 404 error page, and make sure that all tag URLs give back 404 errors.

I hope this helps for now! Please let me know if you have any further questions.

Hey Henn, i’d appreciate if you can provide some insights or help

I am using pressive theme from thrive theme and thrive architect

My problem is in the google console as i have thousands of internal links, while i have 2 or 3 interlinking for each post and i have 50 posts

Thousands of these take this format http://prntscr.com/ktqz53

each post have 100s getting to each of them http://prntscr.com/ktqzpw

all my images are added via html and my xml sitemap has just pages and post

how can i clean up this stuff, my rankings have been dropping and i am thinking this maybe one of the causes

Hi Sammy,

When seeing the screenshots of your error URLs, I’m wondering if your problem is related to the Yoast image attachment bug from earlier this year. Please check this post on the Yoast website and let me know if it helps. If it doesn’t help, I’ll be happy to have another look at your problem.

Hi There,

I have around 8000 pages in my website. It is having around 22000 Not found URL. But when I am checking their source i.e. our internal link, the not found URL is not in that page anywhere.

Possible Reason of there errors are that I assumed:

1. I have replaced my topics taxonomy with tags

2. I have changed my “articles” taxonomy with “Category” taxonomy

3. Sometime I need to change my URLs due to various reasons immediately but my website is frequently crawled, it get cached immediately.. So that URL also comes Under “Not found” category

4. I am assuming these issue arises after the “Core Algo update” of Google On 18th April 2018 which suggest that you cannot change the URL. Is there any fix for the same if this is the only reason?

Some workaround that I have already performed

1. Removed URLs from Webmaster “Remove URL ” option

2. Tried to redirecting the above URLs on the current URL, but the website encounter so many issues, so removed the redirection

Can you please suggest any solution for the same

Hi Rajat,

When you change URLs, you should always 301 redirect the old URLs to the new ones. This might result in lots of redirects in your case, but it is definitely worth the effect.

In order to clean up the existing 404 errors, I recommend you redirect the error URLs to their new equivalents. In future, make sure that you always set up 301 redirects for URL changes immediately.

Also, maybe you can find solutions for not having to change URLs so much in future. URLs should only be changed when it’s absolutely necessary.

I hope this helps!

Hi Eoghan! Very very nice post!

I have a question, can you help me? WebMaster tools is showing me 404 errors, however it shows cut URLs. Sometimes missing a big chunk, sometimes missing only a hunk of html.

Example:

/corujinha-menina-lembrancinhas-e-imagens.h

/Centro-de-Mesa-Mario-Kart-Gr

Correct should be:

/corujinha-menina-lembrancinhas-e-imagens.html

/Centro-de-Mesa-Mario-Kart-Gratis.html

Hi Pedro,

Thanks for your comment. I’m glad you liked the article.

It looks like, for some reason, Google is trying to access these cut-off URLs on your page. The error URLs that are shown in the report are the URLs that Google actually tried to crawl.

Can you see where these URLs are linked from in the “linked from” tab? And do you have lots of error URLs of this type, or just a couple?

I hope this helps. If you have additional questions, please just let me know.

Hello, I am really hoping you can help me. I have a really bad situation with thousands of mystery 404’s showing up every day apparently from a googlebot searching my site. The site is positivemindworks.co . The only way I am able to keep my site active long term is by blocking the whole of the US from accessing it (with wordfence country blocker). Obviously having serious consequences for me SEO! If I lift the block for a day then googlebots try to crawl my site but keep crawling random dynamic mystery 404 pages that make no sense to me at all. After a few days it crashes my server. If you are in the US then I can lift the country ban for the day so that you can check out the site. Just please let me know when you are ready to take a look and I will lift it straight away. Thank you so much in advance!!!

Hi Samantha,

Thank you for your comment. I’m based in Spain, so there’s no problem for me checking your website.

It is indeed not a good idea to block all US IP addresses from your website. You really want Google to be able to crawl your website.

When I check which pages on your domain are currently indexed by Google, I see the type of URLs you are talking about: site: search for positivemindworks.co

Have you always owned this domain or did you acquire it from someone else? Was your website hacked recently?

Your server should normally not crash because of Google’s requests. You might have to think about upgrading your server. Also, you can try to limit Google’s crawl rate in Google Search Console under Site Settings > Crawl rate.

It is important that you find a solution for lifting the country block without Google’s requests crashing your servers first. Then, you should try to find out why these error URLs are being generated.

Apart from the solutions outlined in the article and in other comments, it might be a good idea to block the URLs in questions via the robots.txt file.

Please let me know if you need help with any of this.

My site http://www.jhollowell.com site returns a 403 to scanning tools/ googlebot. It is currently indexed on. Bing and Yahoo. kindly assist with these please.

Hi Ola,

Currently, I can’t access your website and I’m getting this error message:

Bandwidth Limit Exceeded

The server is temporarily unable to service your request due to the site owner reaching his/her bandwidth limit. Please try again later.

It looks like you have some server problems that need sorting out. Let me know when you’ve fixed it, so I can have a look to see if the 403 error for Googlebot is related to this or if there’s another problem.

Hi, I am seeing this in my Google Search Console: Googlebot couldn’t access this page because the server didn’t understand the syntax of Googlebot’s request.

This is a new site that was just set up within the last 2 months. It is WordPress hosted at GoDaddy with Cloudflare https.

Thanks so much for your help and dedication.

Hi Lori,

Thank you for your message. Which URL did you get this error message for? If you send it to me here or via e-mail, I’ll be happy to have a look at it.

Hello, sir, I have an error URL not found but I don’t know how to deal with such URL error because this the URL is like this: https://www.todaystechlog.com/category/pc/post-type-1 OR category/pc/post-type-1. So I don’t have any such URL on my site. Don’t know how such URL is created on my site I already set my permalink to sample post. So I won’t have any such kind of URL on my site. So how do I fix this kind of URL error? am using WordPress.

Hi Akshay,

It might be a URL that existed at some point in the past. If there are just a few errors like this, you can simply ignore them, or redirect the URLs to a matching target.

If there’s a bigger underlying problem and Google keeps finding new URLs, feel free to send me more information and I’ll be happy to have a look at it.

Hi,

Thank you for your article. We are a job listing website. Vacancies on our website expires after some time, so robot finds a lot of “not found” pages due to natural expiration. In our case, how should we handle it?

Hi Sutirth,

Thank you for your interesting question. Without knowing the details of your case, I would suggest giving back a 410 error code for expired vacancies. The URLs will stay show up in your error reports, but Google will understand that the content has been removed intentionally and might re-crawl the URLs less frequently in future.

Another option would be setting up 310 redirects, but I would only recommend this in cases where you have matching redirect targets, such as jobs that are very similar to the ones on the old URLs, which might be a bit difficult to set up in practice.

I hope this helps. Please let me know if you have any additional questions.

Best regards,

Eoghan

Hi, I got same error like top comment but can’t fix.

I have many 404 error url (deleted post)

https://ex.com/post_error_1 => It’s linked from https://ex.com/postx, /posty, /postz

https://ex.com/post_error_2 => same…

https://ex.com/post_error_3

https://ex.com/post_error_4

All page linked from (postx, postx, postz…) I checked and no more link to post_error_1 (or post_error_2…) but I don’t know why google still show linked (seem google not update).

on my site, all 404 error I hold old url and return 404 code (not found) – not soft 404.

so should I redirect 301 all error_post link to https://ex.com/404 (with 404 code return) or hold same old url https://ex.com/post_error_1 with 404 return code.

thank you

Thank you very much for your comment.

You’re right, Google doesn’t normally update the “linked from” information. It shows you were Google’s crawler originally found the URL, so it can happen that the pages shown don’t exist any more.

It is also normal that Google keeps crawling 404 pages to check whether the content has come back. If you like, you can just leave everything as it is and ignore the errors. If there are matching redirect targets for the 404 error URLs, it makes sense to redirect them. This way they will disappear from your error reports in GSC and Google will probably also stop re-crawling them eventually. Another option would be to give back a 410 error, instead of a 404, which is a stronger signal that the content has been removed intentionally and it can lead to Google re-crawling the URLs less frequently.

I hope this helps for now! Please let me know if you have any further questions.

Hi I am also getting same errors what George is getting like

https://www.ticklishblinks.in/fullscreen-page/comp-j7ephedi/2fd25b44-9622-11e7-bb53-12dd26dd586a/18/

I have lots of them. Please help!!

Hi Yogesh,

This seems to be a problem that occurs with lots of pages that are built with Wix.

I haven’t had much time to dig into this, but blocking the directory /fullscreen-page/ via your robots.txt file might help.

Feel free to let me know if any other questions occur while you do your further research on this.

I hope this helps for now!

Hello Eoghan,

Thanks a ton, as your redirection method worked like a charm for me, I was having an issue of 404 error pages, as you said we can find these issues in crawl errors, where search console provides a tab named “Linked from”, this linked from tab was containing my old pages of same website which don’t exist now and these URLs were from http://infusionarc.com and they were indexed but now my URL is https://infusionarc.com. So at first I resubmitted sitemap from my old domain i.e. from “http” and did redirection method on new domain i.e. “https”, and again resubmitted from “https”. Now there are 0 errors showing in search console.

Thanks.

That’s great! Thanks for sharing these details. Good work!

Hello sir,

Can you please help me, I am facing lots of 404 URL generated in webmaster.

Please check image there are all most 25k+ 404 URL generated in webmaster, I have also contact with server support, also scan website they didn’t find anything in my WordPress website.

I don’t’ why “info” folder created automatically. I have checked all source code.

Please help me to fix this issue.

https://prnt.sc/kcrvz9

Thanks,

Hi! Do you have information on where the URLs are linked from? You can find this by clicking on each URL and looking at the “linked from” tab. Without any additional information, it’s difficult for me to help you with this, but feel free to get in touch with more information, so that I can have a closer look at it.

Hi Eoghan,

it’s been a few months since I have a big problem of 404 errors that I can not solve.

I’ll explain shortly.

Apparently there was an error with the SSL certificate, confirmed by the Hosting provider. Because of this Google has linked links to another site, which resides on the same server and has the same shared IP.

This has caused over 3000 fake links linked to our domain.

The serp is completely invaded by these links with a lot of title and metadescription, clicking on them you get to page 404 of our site. By examining the individual links from the serp, google’s cache shows the pages with their original appearance and their original link.

The site is in no way corrupted or malware.

The provider confirms that the problem with SSL has been resolved.

I immediately reported the 404 errors as correct by the search console, but these come back again, I report them almost every day, if I do not, they multiply every day.

Although these links have never been present on our site.

I have also sent the sitemap several times, but it does not change anything.

I hope I was clear.

Do you have some advice?

Thank you

Hi Woody,

Simply marking the errors as fixed will not fix them, it will only remove them from the reports. If you want Google to stop crawling these URLs, I would recommend (in this case) to redirect them. As they never had any content, you probably won’t find a matching target on your website, so you can just 301 redirect them to your home page. This is not a very clean solution (redirecting tons of URLs to the home page is not something I would normally recommend), but it’s the only thing I can think of in an edge case like this.

I hope this helps! Please let me know if you have any additional questions.

Hello Eoghan,

Thank you for your answer.

Would not it be better to report them then as 410 errors?

Perhaps in this way we could even clean up the SERP of our site.

Error 410 indicates deleted contents, so maybe it’s a cleaner solution than 301 do not you think?

Thanks

Its Nice Explanation actually i am facing Soft 404 Errors in my web master tool . i Just started new blog but 404 error is coming. so should i ignore this type of error?

Please help

https://newswoxen.com/ This is website address

You probably shouldn’t ignore soft 404 errors! I’d recommend checking the error pages and figuring out why Google is seeing them as soft 404s (info above: https://www.rebelytics.com/crawl-errors-google-search-console/#what-are-soft-404-errors). I hope this helps! If not, please just let me know.

Hi,

i am using wordpress multisite platform.

please help me regarding 404 not found page error. why i am getting 404 error everyday . i already fixed 300-400 errors but still getting more new 404 not found page error, is there any way to overcome from this kind of problem.

you can check the following

https://prnt.sc/k9505f

https://prnt.sc/k951jw

please help me

Hi Raman,

Sorry, I can’t access your screenshots right now. It says “Lightshot is over capacity”. If you like, you can send me some more info via e-mail and I’ll have a look.

Hello Sir, I`ve found something strange on my website

Just in 2 days it have 400 ++ Url Erros (not found) like you can see here : http://prntscr.com/k36tc0

And all the errors are the same, can see here : http://prntscr.com/k36ts4

Why would Google bot crawl until the page so far far away? I really dont get it why.

Do you have a solution for my problem sir?

It looks like you’ve got something strange with pagination going on there. I quickly checked your indexed URLs and it looks like most of them end with /page/-number-/. If some of these URLs stop existing, you should make sure to redirect them to a matching target, so that they don’t throw 404 errors.

I hope this helps!

Hi Sir,

I am trying to crawl my website https://wdsoft.in/ in webmaster tool, but it’s not crawling successfully, it’s continuously shown redirected, please help me how to fetch my website as google fectch.

I hope you help me.

Thanks

Hi Arun,

at a first glance, I can’t detect any important problems. Are you using the right property? If you’re in the http or www property, although your page is on https and without www (and http and www URLs redirect to the correct versions), all URLs you fetch will show as redirected.

I hope this helps! If you have additional questions, please just let me know.

Hi Eoghan Henn !

My Name is Samia and I’m a blogger. I’m facing an issue named “Server Error”. I’m using Newspaper 8 Theme and the Error is ” /wp-content/themes/Newspaper/” can ou please guide me how to Fix it ?

Hi Samia!

Thank you very much for your comment. You shouldn’t worry too much about this error. Maybe you can find out how Google found this URL? This discussion might help: https://www.rebelytics.com/crawl-errors-google-search-console/#comment-11988

Please let me know if there’s anything else I can do for you.

Hi,

I bought a new domain through Shopify and set up the store. Suddenly some strange links started to appear in search console like 404 errors. Those links are from a site which had the same name, and was previously owned by someone else and when I looked up in Wayback machine this website was active till 2017 and it was some sort of world news website. My shop is related to fashion and I am getting links like world news, archive567, tag/beyonce, car accidents, tag.christians, tag/campus etc. There is no way to redirect them. I have deleted those links like they were fixed but they reappear again and again. I don’t want to start a brand new website with some spamy links and warnings from Google. Please tell me how to deal with it and how they can sell domain which is not completely clean.

Thank you

Hi Lana,

Thank you for sharing this interesting case. What’s happening here is totally normal – Google still knows the old URLs and tries to re-crawl them once in a while, in order to see if the old content has come back. This will probably never stop, unless if you set up 301 redirects from those old URLs to new targets that actually work. Another option that might work would be returning a 410 code instead of a 404 code. This is a signal to Google that the content has been removed intentionally and they might re-crawl the URLs less often or stop crawling them altogether. If Shopify itself doesn’t give you these options, there’s certainly another way of doing it. 301 redirects, for instance, can be set up fairly easily, if you have access to your .htaccess file.

I hope this helps for now!

Hello Eoghan!

We recently received an email from Google telling us that we had an increase in “404” pages, and when I logged into our Google Search Console, saw that they are almost entirely links to pharmaceutical drugs. Why would this be happening and what should I do? I’ve changed all passwords in case we were hacked, but didn’t receive any kind of alerts from our WordFence.

Apparently, the source of the link is another link on our website that is also marked as a 404. Really confusing.

Thank you for your help!

Katie H.

Hi Katie,

Sorry about my late reply. I’ve been very busy these last few weeks. The problem you describe could indeed be related to some kind of website hack. If you send me some additional information, I’ll be happy to have a closer look at it to see what it might be.

Best regards,

Eoghan

How should I think about it if URLs keep recurring in this report that were once broken URLs on a site but which have since been removed? In some cases, Google still claims a “linked from” to be pointing at the URL which I’ve verified does not contain it.

This happens a lot. The “linked from” info should not be interpreted as “currently linked from” but rather as “once upon a time linked from (when we first found this URL)”. Google will keep recrawling old broken URLs once in a while. If you really want it to stop, you can redirect the old URLs to matching targets. Otherwise, you can just ignore the crawl errors.

i have some faulty links from other sites which are pointing to my site assets for which i cant even put 301, so i wonder is it safe to redirect all 404 to home page just or its bad

Hello Zahid,

redirecting a bunch of URLs to the home page should always be the last option. Can’t you find better redirect targets, at least for some of the URLs?

Hello, for some reason I got a regular amount of 404 errors through search console, that I took care of, but there are many more with random urls that I’ve never seen before when I check in the Redirection plugin. Do you know what this could mean?

https://ezhangdoor.com

Thanks!

Hi Trey,

Do the URLs only show up in your redirection plugin, or also in GSC?

Very informatif posting sir. I had some problem with my website crawling. Many of my website url suddenly error. The url got messed up. There are two slash. Here some example:

https://modifmotor1.com/cover-bodi-samping-vario-125-techno-lama-warna-lengkap/cover-bodi-samping-vario-125-techno-lama-warna-putih/

https://modifmotor1.com/per-klep-racing-jepang-satria-fu/per-klep-jepang-satria-fu/

https://modifmotor1.com/rumah-roller-racing-honda-vario-110-ktc/pulley-set-roller-racing-honda-vario-110-ktc/

Please help. It made my website many of the post in page 1, gone 🙁

Thank you in advance

Hi Zhoel,

I’m sorry, I’m not sure I understand your problem. Your example URLs are working. Could you send me some additional info, so that I can try to help you?

Hi Eoghan,

I recently redesigned this site on an entirely different server after being hacked (0ld-Prestashop). there were more than 1000 pages and google alerted us to the hack. I fixed the issue and actully found the directory in the hidden files on the server, we no longer have that host. All the files were recreated for the new design (watermarks placed on the artwork) this is a stencil site.

How do i get rid of the 404 errors as a result of the hack? fixed the site within 2 days of the hack but, a month later the 404 errors are still showing.

thanks

Therese

Hi Therese,

I’m sorry to hear your website was hacked, but it’s a good thing you got it fixed so quickly.

Google will probably keep recrawling the fake URLs once in a while, and you have three options for dealing with the 404 errors, none of which is ideal:

1. Just ignore them. They probably won’t do your SEO performance any harm. The downside is that they’ll get in your way in the crawl error reports.

2. Change the status codes for all of the fake URLs to 410. They will keep showing up in the error reports every time they’re crawled, but with a 410 error code there’s a chance that Google will crawl them less regularly and will eventually stop recrawling them.

3. Redirect all of the fake URLs to another URL, e.g. the home page. You normally shouldn’t abuse 301 redirects like this, but I guess that in your case it would be an option to just make the URLs go away from your crawl error reports. You don’t constantly want to be reminded of that time your site got hacked, right?

I hope this helps. Please let me know if you have any further questions.

As you said First, All error mark as fixed. My question is If not found error more the 5000+ how to mark as fixed in once as it only show top 1000 links. Is there any way to mark fixed all 5000+ link at once

I’m sorry, I don’t know of any other way to mark crawl errors as fixed than doing it 1000 by 1000, as soon as the next 100 show up. Even if you use the API and don’t do it manually, you have to wait for the next 1000 errors to show up, as far as I know.

hi Eoghan Henn!

I’m in trouble I receive a message from google console.

” Warnings

URLs not accessible

When we tested a sample of the URLs from your Sitemap, we found that some URLs were not accessible to Googlebot due to an HTTP status error. All accessible URLs will still be submitted.

1

Sitemap: urdutalkshows.org/sitemap_post.xml.gz

HTTP Error: 404

URL: http://urdutalkshows.org/countries-facts/facts-about-Saudi-arabia.html”

How it fix this problem…!

Hi Hafiz,

In order to fix this problem, you should remove the URL with the 404 error status code (http://urdutalkshows.org/countries-facts/facts-about-Saudi-arabia.html) from the sitemap (urdutalkshows.org/sitemap_post.xml.gz). Or, if the URL http://urdutalkshows.org/countries-facts/facts-about-Saudi-arabia.html should be available, make sure it works again and doesn’t give back a 404 error.

I hope this helps!

After reconstructed my website webmaster shows many crawl errors about older URLs..After i mark it as fixed can i redirect it to new URLs ..is Google will consider this redirection?

Hello Afzal,

Google will start processing these redirects as soon as it re-crawls the URLs, which can take a while. The errors should not show up in your reports again.

I have a question regarding the relationship between the graph that is displayed and the number of errors that show below. If I hover on the graph it will show “36 errors” on March 12. If I look in the list of URL’s it will show 5 URL’s listed on the same date. Where do the 36 errors come from if only 5 URL’s are listed?

Thanks!

Hi Kara! The number represented in the graph is the total number of crawl errors that Google is currently displaying for your website. If you see a total of 36 errors and 5 error URLs that were discovered on March 12, the number of errors displayed in the graph for March 11 should be 31. If it’s higher, that’s because some errors were removed the same day (meaning that Google re-crawled previous error URLs on March 12 and found that they were no longer returning errors). I hope this helps! Please let me know if you have any further questions.

I got the problem on my voxya website. When I added SSL on the website then webmaster start showing server connectivity (Connect timeout, Connection refused, No response) and robots.txt (Unreachable) fetch problem. Please help me how I can resolve this problem.

Hello Akriti,

Sorry to hear about your problems! Did you make sure all of your http URLs now redirect correctly to their https equivalents? Did you set up a new Google Search Console property for your https version?

Feel free to send me some more information and I’ll be happy to help.

Hello,

My website getting 503 errors in WMT all those not found pages redirected to main page that is how my script designed. So please tell me what should i do to 503 errors? should i “fetch as” and point them all to my main page?

thank you

Hi Anthony,

503 errors are often caused by a temporary problem (like server overload). You should check if the pages GSC shows 503 errors for are constantly unavailable or if it really just is something temporary. If it’s a temporary problem, you don’t really have to do anything to the URLs, except for making sure the problem doesn’t return. Maybe you need a better performing server?

The other thing you mention, with all 404 error pages automatically being redirected to the home page, is something you might want to review. The home page is not always the best redirect target for URLs that no longer exist.

I hope this helps!

hi, i could fixed all my errors in google search console.

thank you.

Happy to hear that! Thanks for your comment.

Hi i have a couple of errors which i cant figure out how to correct.

Access Denied

https://cassies.com.au/feed/?attachment_id=3380

404 error not sure what to do.

sitemap-tags.xml

Thanks

Danny

Hi Daniel,

Here’s what you can do:

– Find out where the URLs are linked form and remove the links.

– If possible, redirect the URLs to a fitting target.

– Mark the errors as fixed in Google Search Console.

I hope this helps!

how do i have get rid of crawl errors on google web master. All the crawl errors are for pages that are deleted because products are discontinued.

Hi Olive,

When you delete pages because products are no longer available, you have many options, three of which are:

1. Simply return 404 errors for the deleted URLs, as you’re doing right now. This will cause 404 errors in GSC and probably also for some users, but it won’t do too much harm, so it’s OK if you don’t have the resources to set up another solution.

2. Find a matching product that you can 301 redirect the old URL to. If you choose this option, you should make sure that the product you redirect the old URL to is a good and relevant replacement for the discontinued product.

3. Give back a 410 error instead of a 404 error for discontinued products. This is a stronger signal that the URL has been removed intentionally and might lead to Google trying to re-crawl it less (although this is not guaranteed). In your crawl error reports, URLs with a 410 error code will still show up as 404 errors.

I hope this helps! Please let me know if you have any additional questions.

Update: 410 errors now show up as 410 errors in Google Search Console, and are no longer marked as 404 errors.

hello, Eoghan Henn nice info.

what exactly I get from google webmaster

1 server error and 22 not found for desktop

0 server error and 2 not found for smartphone

so can I fix that server error?

thanks

Hi Bablu,

First, you should check if the server error is still happening – often they are just temporary. If it still happening, check if the URL should really be working. If not, make sure you don’t link to it and redirect it, if you can. If it should be working, try to find out why it isn’t and fix it.

I can’t help you much without additional information, but feel free to share more details (via e-mail if you like).

Those 22 link on google webmaster whenever I click on them they all redirect to 404 not found. A couple of day ago i edit all of these link to make better my seo using Yoast . isn’t that is result of that. and also server 1 error still appears.

Hello Bablu,

It’s good that you have already identified the links pointing to the error URLs and changed them. Now, if possible, you should redirect the error URLs to matching targets (there are also WordPress plugins for this). Then you can mark the errors as fixed in Google Search Console and they should not come back.

For the remaining server error, you can do the same.

I hope this helps!

Hello Eoghan,

can You solve this problem? Not followed link in google search console.

this link made by feed burner.

there is an example:

2012/05/mark-twain-all-bangla-onobad-e-book-%E0%A6%AC%E0%A6%BE%E0%A6%82%E0%A6%B2%E0%A6%BE-%E0%A6%85%E0%A6%A8%E0%A7%81%E0%A6%AC%E0%A6%BE%E0%A6%A6-%E0%A6%87-%E0%A6%AC%E0%A7%81%E0%A6%95-%E0%A6%AE%E0%A6%BE/feed/

Hi Anick,

I believe that these URLs are generated in a link element in your head section.

If you go to the source code of the page https://allbanglaboi.com/2012/05/mark-twain-all-bangla-onobad-e-book-বাংলা-অনুবাদ-ই-বুক-মা/, you will find this element in line 48:

<link rel=”alternate” type=”application/rss+xml” title=”Allbanglaboi – Free Bangla Pdf Book, Bangla Book pdf, Free Bengali Books » Mark Twain All : Bangla Onobad E-Book ( বাংলা অনুবাদ ই বুক : মার্ক টয়েন এর গল্প সমগ্র ) Comments Feed” href=”https://allbanglaboi.com/2012/05/mark-twain-all-bangla-onobad-e-book-%e0%a6%ac%e0%a6%be%e0%a6%82%e0%a6%b2%e0%a6%be-%e0%a6%85%e0%a6%a8%e0%a7%81%e0%a6%ac%e0%a6%be%e0%a6%a6-%e0%a6%87-%e0%a6%ac%e0%a7%81%e0%a6%95-%e0%a6%ae%e0%a6%be/feed/” />

The URL that is linked in the href attribute gives back a redirect that doesn’t resolve properly, that’s where the “not followed” error comes from.

I hope this helps! Please let me know if you have any further questions.

Thanks Eoghan for the reply. but I can’t solve this problem. in google search shows 516 Not followed link on my site.

Hi Anick,

I’m sorry my reply didn’t help you. Maybe you know a web developer you could ask how to remove the element that is causing the errors from your source code?

We fixed thousands of 404 errors with redirection, but just out of curiosity, is it necessary to mark the URLs fixed in GWT or will the Crawl Error report eventually be updated automatically?

Hi Carey,

As far as I know, the crawl error report is updated every time Google attempts to re-crawl an URL that previously had an error, if the error is gone by then.

Redirected URLs should disappear from the crawl error reports over time.

i have marked it as fixed but still its showing on. and i dont want to redirect it.

I have also deleted from my website, now what should i do ?

Hi Ankush,

If you mark an error as fixed and it shows up again, that means that Google tried to access the URL again. You can ignore this, if you like, which shouldn’t cause to many problems in most cases. You can also change the status code of the URL to 410 and hope that this helps Google understand that the URL is not coming back and that they try to access it less often.

I hope this helps! Please let me know if you have any further questions.

I have these 5 errors come up in the last few days and my site has been fine and I havent done anything to it.

The first 4 say not found url points to a non existent page, the last one says other – google was unable to crawl this url due to an undertimined issue

Any help correcting it would be much appreciated

1

fullscreen-page/comp-jbuvb96h/779897c6-9f10-4634-a89c-088088fda3b4/32/%3Fi%3D32%26p%3De6zct%26s%3Dstyle-jbvcqto6

404

1/18/18

2

fullscreen-page/comp-jbuvb96h/097cd7ba-d2dd-4f27-b842-41fb9e4bce71/10/%3Fi%3D10%26p%3De6zct%26s%3Dstyle-jbvcqto6

404

1/17/18

3

fullscreen-page/comp-jbuvb96h/51ce979e-13b2-4583-9922-0669fdf3009d/4/%3Fi%3D4%26p%3De6zct%26s%3Dstyle-jbvcqto6

404

1/17/18

4

fullscreen-page/comp-jbuvb96h/c67a24e8-5266-4d0f-9da7-dbf04366a86e/21/%3Fi%3D21%26p%3De6zct%26s%3Dstyle-jbvcqto6

404

1/17/18

1

_api/common-services/notification/invoke

405

1/20/18

Hi George,

Thank you for your comment. I had a quick look at your website and I couldn’t find out where these URLs are being generated.

If you do a site search for your website, you will see that there are lots of URLs in Google’s index that you don’t really want there (including URLs like the examples you provided):

site:georgecoullpaintinganddecorating.co.uk

Your website only has one URL that you need crawled and indexed, so it might be a solution to limit crawling to only this URL via your robots.txt file (without blocking resources that are needed to render the page).

I hope this points you in the right direction. Please let me know if you have any further questions.

Hi George! Did you find the solution for your errors? I am also getting the same errors. Please help. I guess it has something to do with Wix

There are many products on my site, many products are no longer for sale,, and we have directly deleted the products that are no longer being sold. As a result, there are many 404 pages((more than 1,000) in Google Search Console. Recently, Google rankings are dropped rapidly. How should I handle this?

301 redirected to the home page? Or blocked resources by robots.txt?

Thanks!

Hi Kingbig,

Thanks a lot for your comment. First of all: If you lost rankings, this is probably not due to the 404 errors in your reports. 404 errors do not directly cause ranking losses. What might have happened is that the pages you deleted were ranking before – hence the ranking losses after deleting them.

Here are some things you can do:

If you really have to delete a page, redirect the URL to a similar target, NOT to the home page. A similar target in your case might be a newer version of a discontinued product.

Think about whether it always makes sense to delete pages of products that are no longer sold. If they can’t provide any value any longer – sure, delete them. But maybe some of your products turn into collector’s items once they’re sold out? If people are still searching for them, try to provide them with info about the product that they might be interested in. This is good branding for you and next time they might buy from you. In general: if you have a page for something that people are searching for, do your best to give them what they need.

Also, try to find out what exactly caused your ranking losses: Which pages and keywords dropped? Is this really related to the pages you deleted or did something else happen?

In hope this helps for now! Please let me know if you have any further questions.

Hello

I get some 404 errors everyday from “Google Webmaster Tools”, whereas these 404 pages (that Google says) have deleted

for a long time and I have cleaned these old URLs several times by “remove urls” tools either.

I didn’t find any way exept “301 redirect” and i used wordpress plugin to do that. the plugin solved my problem, but reduces

my site speed, so that my site couldn’t load at all.

I did “redirect” technic by htaccess file (without any plugin) but i have this problem (reducing page speed) again .. !

I would appreciate it if help me fast, because i can’t find another way to solve and this issue is important for me ..

Thanks a lot ..

Please note that marking errors as fixed doesn’t change anything for Google: They will continue crawling the error URLs once in a while to check if they’re still not working.

301 redirects are a good way to get rid of errors, as long as you find valid redirect targets.

Using a .htaccess file sounds like the right option. How many rules did you add to it? It would have to be extremely big to slow down your website. Improving your servers’ performance might be the right way to go.

I hope this helps!

There is a page on our corporate website called /Folsom

But I’ve noticed that in WMT a series of randomly appearing 404 pages, such as:

Folsom/UaURY/

Folsom/LMZVM/

Folsom/mTfQh/

Folsom/hTWSZ/

There are dozens of these, and I have no idea where they are coming from. It makes me wonder if Googlebot is trying to crawl one of the ASP.NET form tags but that wouldn’t make sense.

If there is some easy way to prevent these let me know.

I spoke with our IT department and we were able to track down what these URLs were coming from. An external email program from one of our clients was generating these URLs, that explains why there was no referrer. Thanks

Hi Mike,

Thanks for sharing this interesting case. How did Google end up crawling these URLs?

Best regards,

Eoghan

Hi,

In my search consol showing crawl error 404 and same link delete from my website dashboard ( website builder). I marks as fixed all error but they don’t working or same page error 404 showing.

Hi Lokesh,

Thanks a lot for your comment. Marking an error as fixed just stops the error from showing up in your reports, but it doesn’t actually fix the error. If you want to achieve this, you should also set up a 301 redirect from the URL that is no longer working to a matching target, if there is one.

I hope this helps. Please let me know if you have any further questions.

Nice article.

I have a question though. I have 188 links ending in // and when I want to see where they are linked from the “Linked from” tab dosen’t appear. What does that mean?

Thanks.

Hi Casper,

Thanks a lot for your comment. Google does not always show “linked from” information. This often happens for very old URLs, or sometimes (but probably not in your case) also for URLs that Google accesses although it didn’t find any links pointing to them (for example m.yourdomain.com to check if there is a mobile subdomain).

Can you determine redirect targets for the faulty URLs? If so, I would recommend you set them up and don’t worry too much about how Google came up with the URLs.

I hope this helps. Please let me know if you have any additional questions.

I am Vivek Suthar. when my website was live my website has ‘capital letter’ links and google index all links, but I got the good result. then after I transferred all my website links into ‘small letter’ submit sitemap again, but now a day I lost my traffic but my all keyword position is not changed. plz..give me some suggestion for it.

Hello Vivek,

Thank you very much for your comment.

It seems odd if you lose your traffic, but not your rankings. Maybe one of the data sets you are using is not correct or not up-to-date?

Changing all of your URLs can cause a loss of traffic, especially if you don’t redirect your old URLs to their new equivalents. And even then, you should expect a temporary loss of organic traffic. Did you set up redirects from your old capital letter URLs to your new lower case URLs?

Please let me know if I can do anything for you to solve this.

Hello Eoghan,

Greetings of the day!

I read your article. I got some help from your article but I am stuck somewhere and need your help. My website has desktop and mobile site. I checked in webmaster crawl error menu that some desktop url have 404 error which I will have solved with the help of the team but when I go to the smartphone urls then its showing faulty redirect. 62 links showing faulty redirect on smart phone url. Please help how I can resolve these faulty redirect which is showing in the smartphones. Please help.

Regards,

Ankit

Hello Ankit,

Thanks a lot for your interesting question.

The “faulty redirects” error often occurs if redirects for mobile users don’t send mobile users to the exact equivalent of the desktop URL they requested on a mobile device, but to the home page of the mobile version instead. Is this the case on your website?

More info from Google here: Faulty redirects.

If you like, you can share more info with me and I will have a closer look at your problem.

Hi Eoghan,

Thanks for helping me. In future, if I get any problem I will surely message you.

Thanks

Dear sir,

How to remove a crawler errors in webmaster tools. Please info. me point to point.

Following errors in my site.

Current Status

Crawl Errors

Site Errors

DNS Server connectivity Robots.txt fetch

URL Errors

2 Soft 404

3 Not found

Hi Thalib,

Your low numbers of crawl errors are really nothing to worry about. If you still want to fix them, these two sections of the post should help you:

https://www.rebelytics.com/crawl-errors-google-search-console/#what-are-soft-404-errors

https://www.rebelytics.com/crawl-errors-google-search-console/#the-classic-404-crawl-error

If anything remains unclear, please let me know. I’ll be happy to advise further.

Thanks for this article. We did a relaunch of a quite big page a few months ago. We could decrease the 404 from about 110’000 pages to about 50’000 pages. All the important pages have been redirected, so the 50k missing ones at the moment are just “collateral damage” but they keep beeing stuck in the Google Index. Therefore I would like to forward them all to the home page. Do you know how I can download more than 1000 pages? Thanks

Hi Simon,

Thanks a lot for your comment. Unfortunately, I believe that you cannot export more than 1000 errors at a time. This limit seems to hold, even when using the API.

Are the 50000 pages that are left actually indexed (as in: they show up in Google’s search results when you perform a site search for your domain), or do they just keep showing up in the error reports in Google Search Console?

I would generally advise against just redirecting such a big number of URLs to the home page. This wouldn’t really add any value to the situation. Better options would be finding matching redirect targets, or changing the status code to 410, to signal that the pages have been removed intentionally.

Please let me know if you have any further questions.

hello,

my site had a lot of 404 error, and i fix them by google webmaster. but some of them repeat everyday yet, what should I do?

( they are the links that i received from other sites or blogs and these URLs are changed now)

please help me.

thank you.

Hi Leila,

Just marking errors as fixed in Google’s Webmaster Tools doesn’t really fix errors. If they show up again the next day (or later), this means that Google has tried to access the URLs again.

If you received links from other websites to URLs that do not exist any longer, it is very important that you set up 301 redirects from the error URLs to pages that work and that have similar content. This way, you do not only prevent 404 errors from showing up in Google Search Console, but you also send users that click on the links to the right target and direct the relevance that these links pass to your website to important pages, instead of losing it to an error page.

Please let me know if you need any further guidance with this issue.

Hy sir!

I really Like Your Post and read it from start to end…

now, the question is that “How we completely remove the crawl errors 404 “not found” and delete disappear that posts which i have already removed and that create the 404 not found error …

i want to make my site error free totally…

please help!

i will extremely wait for your response…..

Thanks is advance

Hello Alex,

Thank you for your comment. I’m sorry you had to wait so long for my reply.

There are very few sites that are completely error free, but it’s a good goal!

If you want to make all 404 errors disappear, you will have to set up 301 redirects for all error URLs to working targets. But please make sure that the targets match the content of the original URLs! It does not have to be exactly the same content, but a good equivalent. It would not be a good idea to set up redirects just to make the errors disappear if you do not have matching targets that you can redirect the URLs to.

I hope this helps! Please let me know if you have any further questions.

hi Eoghan Henn, please provide details about blocked resources by robots.txt, how to solve these errors.

thank you!!

Hello Agon,