Website migrations, redesigns and relaunches can be risky: you can lose rankings, or entire parts of your website can disappear. But we are here to help you plan and minimize the risks! If you prepare and plan your redesign or migration with care, chances are good that you will not harm your SEO performance during or after migrating your website.

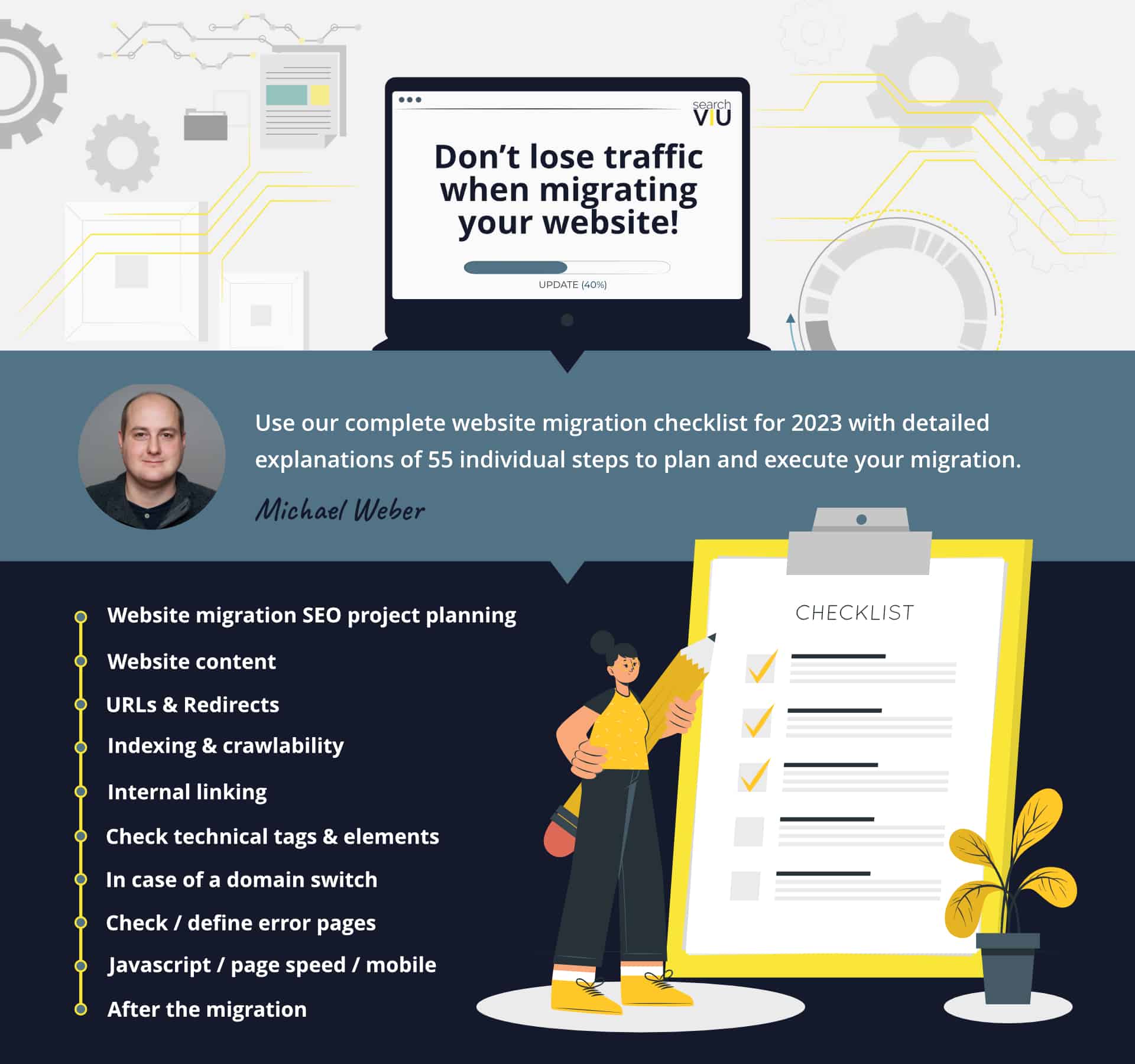

Here we have put together our ultimate website migration SEO checklist for 2023. If you manage to tick off all of the boxes on this checklist, you have a good chance of executing your redesign or migration with minimal risk. You can find a detailed description of each item on this website relaunch SEO checklist by clicking on it. Here you will also find a complete migration guide including the checklist as a PDF.

Website relaunch SEO project planning

Website content

URLs & Redirects

Indexing & crawlability

Internal linking

Check technical tags & elements

In case of a domain switch

Check / define error pages

Javascript / page speed / mobile

After the migration

Website relaunch SEO project planning

Awareness for website relaunch SEO within your organization has been raised.

One of the most important steps for the SEO success of a website relaunch is raising awareness within your organization for the importance of SEO for a website relaunch project. If your colleagues or bosses don’t know about the harm a website relaunch can cause to your SEO performance or if they don’t understand what needs to be done in order to manage a website relaunch without losing organic search traffic and revenue, you will probably have a hard time getting the resources and budget you need to make this project a success.

If you need support with raising awareness within your organization for the importance of SEO for a website relaunch, this article is for you:

How to make SEO a priority within your website relaunch project

Resources for troubleshooting after the relaunch have been allocated.

No matter how well you plan your website relaunch from an SEO perspective, something almost always goes wrong. Make sure you have SEO and IT resources blocked for troubleshooting after the relaunch.

The team involved in the website relaunch project certainly deserves a decent holiday once the project is finished, but it would be best to wait a couple of weeks after the official launch date, to make sure that all problems that arise after the relaunch can be taken care of.

At the end of this checklist, you will find a number of items you will have to take care of after the actual relaunch. In most cases, you will find mistakes and problems that you will have to take care of immediately. Three or four weeks after the relaunch, you will normally be able to tell whether the relaunch was a success from an SEO perspective, or not.

A project plan has been set up.

SEO can be a lot of fun, especially when you do magic and feel like a wizard. Unfortunately, an important part of SEO success is decent project management (not so much fun). A website relaunch project is normally very complex and involves stakeholders from different departments, teams and external agencies. If you want to make sure that all your SEO items get done with the priority they deserve and in order to properly plan your resources and budgets, you should set up a project plan for the SEO part of the website relaunch project. Use our checklist to plan and execute your website relaunch.


Website content

All content that is driving traffic to the current website is available on the new website.

Content that is removed from your website will obviously no longer be able to drive organic search traffic to your website. Check your current content for SEO relevance in your web analytics tool and in Google Search Console or the webmaster tools at Bing, Yandex or others. Pages that are important landing pages for organic search traffic should always be maintained on the new website.

Learn how to identify important content on your old website in this article:

Website relaunch? How to identify important content on your old website

Pages with promising search engine rankings are available on the new website.

On top of checking which content is currently driving traffic to your website, it also makes sense to have a look at content that has promising rankings and might have potential in the future. If you only check your web analytics tool, you might miss content that has decent rankings, but does not rank high enough to drive traffic yet. Additional tools like Google Search Console and specialized ranking tools have more detailed information about pages that have organic search impressions, but not many clicks. These pages should be maintained on the new website and optimized in future, so that they can attract new visitors to your website.

If you ignore these types of pages, you will probably not lose any organic search traffic after the relaunch, but you will kill a lot of potential for future SEO performance improvements.
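As a rough illustration of how such pages can be filtered out of a ranking data export, here is a minimal sketch. It assumes you have exported page-level data as rows with the keys `page`, `impressions` and `clicks` (hypothetical names, adjust them to your export), and the thresholds are arbitrary starting points, not recommendations.

```python
def find_potential_pages(rows, min_impressions=100, max_ctr=0.01):
    """Return pages that get impressions but hardly any clicks.

    rows: iterable of dicts with the (assumed) keys
          "page", "impressions" and "clicks".
    """
    candidates = []
    for row in rows:
        impressions = row["impressions"]
        clicks = row["clicks"]
        # Skip pages that are barely shown in search results at all
        if impressions < min_impressions:
            continue
        ctr = clicks / impressions
        if ctr <= max_ctr:
            candidates.append((row["page"], impressions, clicks))
    # Pages with the most impressions come first
    return sorted(candidates, key=lambda item: item[1], reverse=True)
```

The resulting list can serve as a starting point for deciding which low-traffic pages should still be migrated.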

Our article about how to identify important content on your old website also covers this topic:

Website relaunch? How to identify important content on your old website

All inbound links point to relevant, up-to-date content on the new website.

Inbound links (links from other websites that point to your website, often also referred to as backlinks) are still one of the most important ranking factors for search engines. The page that is linked to from another website benefits most from the inbound link, but other pages on your website that are linked to from this page can also rank better. The relevance of the backlink is passed on from the linked page to pages this page links to.

Therefore, it is important to make sure that all inbound links point to relevant content that is useful to your website visitors and has other positive ranking signals. An inbound link should never point to an error page or to old, out-of-date content. Also, pages that are linked to from other websites should themselves link to other important pages on your website, in order to strengthen those other pages too.

If, for some reason, a URL that has an inbound link pointing to it cannot exist on your new website, you can place the content on another URL and redirect the linked URL to the URL that has the content on it. If the content itself cannot exist on your new website any longer, you should create a new piece of content that satisfies the needs of the users that click on the link and land on your page.

You can use specialized link tools to find all URLs on your website that have inbound links pointing to them and Google Search Console or Google Analytics can also provide some valuable insights on this.

PDFs and images that drive search engine traffic are available on the new website.

Images, PDFs and other file types require special attention, because you might not find any information about them in your web analytics tool or other tools you use regularly. If your website attracts a lot of organic search traffic through images, PDFs, or other file types, make sure to make this content available on your new website.

Pro tip: Think about using your website relaunch as an opportunity to replace PDF files that are attracting organic search traffic with HTML pages that contain the same content. HTML pages have several advantages over PDFs:

- HTML pages can be embedded into your natural website navigation structure, so that users get a chance to discover more of your content. PDFs, on the other hand, are normally dead ends, because they don’t have a navigation menu or other types of outbound links, so all the user can do is hit the back button and return to the search engine results page.

- HTML pages show up in your web analytics tool, while PDFs normally don’t. Traffic to HTML pages can thus be analyzed and the insights can be used for optimizations, while PDFs create black holes.

- HTML pages are a lot more mobile-friendly than PDFs. Who wants to open a PDF on a smartphone?

Learn more about content planning (including how to deal with PDFs, images and other file types) when you relaunch your website in this presentation:

Website relaunch SEO – Planning your website content for a successful relaunch

All important search topics (aka keywords) are targeted on the new website.

When you move all of your important content to your new website, make sure to also pay attention to search topics or keywords that have been driving traffic to your old website. Even if SEO is constantly evolving, it is still important to put the right keywords in the right places on your organic search landing pages: In the title tag, meta description, headlines, internal links pointing to the page, and so on. When you migrate your content to your new website, it might happen that some of the keywords that were in the right places on your old website disappear on the new website. This can do harm to the ranking of some pages on your new website.

Tools like Google Search Console and specialized ranking tools give you plenty of information on the keywords that are currently driving traffic to your website. Make sure that the new versions of the pages that are attracting this traffic are still optimized for the same keywords after the relaunch.

Previous content optimizations have been migrated to the new website.

If the content on your old website has already been optimized for better search engine rankings, these optimizations should be migrated to the new website. Otherwise, you risk losing the traffic that you have gained through these optimizations again.

These optimizations might include changes to headlines, links from certain words on a page to other pages, the use of keywords and related terms in texts, alt attributes on images, and many more. It is best to check your history of SEO measures from the last few years and make sure you have a good understanding of what has been optimized in the past before you make changes to your content or delete certain elements from it.

All changes to your website content that might be necessary because of design restrictions or technical and structural changes to your pages should be closely monitored from an SEO perspective.

URLs & Redirects

Unnecessary URL changes have been avoided.

“If it ain’t broke, don’t fix it.”

This saying applies to lots of things, but it is particularly important when it comes to URLs. Try to keep as many of your old URLs as possible on your new website. Only change URLs if you really have to. For example, if all your blog posts have the directory /blog/ in them now, there’s absolutely no need to strip this directory from the URLs on the new website, just because you read that shorter URLs are better for search engine rankings.

In most cases, keeping your old URLs will help you more than making little “improvements” to them. Every time you change a URL, you lose the entire history of the old URL and you can only transfer a part of it to the new URL by setting up a 301 redirect.

Long story short: Keep your old URLs if you can, and only change them if you really have to.

There is exactly one URL per piece of content (no duplicate content).

When creating your new website, pay close attention to your new URL structure. Duplicate content is produced when one page of your website can be reached via different URLs. This can be avoided in most cases by creating a good URL structure and avoiding unnecessary duplication of pages.

If, for some reason, you cannot avoid duplicate content, make sure that you at least take care of the problem by implementing canonical tags correctly.

Please note that identical or almost identical variants of a page for different countries or regions are not duplicate content. If, for example, you have a US and a UK version of your website that have almost the same content, but are hosted on different (sub)domains or in different directories, search engines will not consider this as duplicate content. Make sure you help Google and others understand your international website structure better by implementing hreflang correctly.
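To spot pages that can be reached via several URLs on the new website, you can group crawled URLs by their content. The following is a minimal sketch that assumes you already have a mapping of URL to HTML body from a crawl (the `pages` dictionary is hypothetical). It only catches exact duplicates; near-duplicates need a fuzzier comparison.

```python
import hashlib
from collections import defaultdict


def find_duplicate_urls(pages):
    """Group URLs whose response bodies are identical.

    pages: dict mapping URL -> HTML body (assumed to come from your crawl).
    Returns a list of URL groups, each with more than one URL.
    """
    groups = defaultdict(list)
    for url, body in pages.items():
        digest = hashlib.sha256(body.encode("utf-8")).hexdigest()
        groups[digest].append(url)
    return [sorted(urls) for urls in groups.values() if len(urls) > 1]
```

Each group returned is a candidate for consolidation or for a canonical tag.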

Each old URL that no longer exists redirects to a correct new URL.

This is probably the single most important SEO item you have to take care of when you relaunch your website. Every URL that no longer exists after your website relaunch has to redirect to a URL that is available in the new website structure.

In an ideal case, there would be a very good equivalent of each old page on the new website, so that every old URL could redirect to a new page that is very similar to the page the old URL hosted.

When there is no exact equivalent of the old page on the new website, the redirect should be set to the new URL that best matches the old content. It is not advisable to simply redirect all old pages that don’t have an equivalent on the new website to the home page.

If the redirect destinations you choose continue to satisfy the search intent of the users that landed on the old pages before the relaunch, you have a good chance of keeping most of your rankings after the relaunch.

Redirect chains have been avoided.

All redirects you set up should point directly to their destination and not redirect via an intermediary URL.

Redirect chains often happen when a website relaunch involves a domain change or a switch from http to https. In some cases it might seem easier to redirect all old http URLs to their https equivalents first, and then set up redirects from the https counterparts of the URLs that no longer exist to their new equivalents.

The same method might be tempting when a domain change is involved. Some webmasters redirect old URLs to their counterparts on the new domain first, and then implement another set of redirects that takes care of the old URLs on the new domain.

These shortcuts should be avoided by all means. When it comes to redirects for a website relaunch, there is no easy way out. Make sure all old URLs redirect directly to their new equivalents.
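If your redirect rules live in a simple source-to-target mapping, chains can be collapsed before the rules go live. The sketch below assumes such a mapping as a Python dictionary (the function name and data shape are our own illustration, not a feature of any particular server or CMS).

```python
def flatten_redirects(redirects):
    """Point every source URL directly at its final destination.

    redirects: dict mapping source URL -> target URL.
    Raises ValueError if the rules contain a redirect loop.
    """
    flat = {}
    for source in redirects:
        seen = {source}
        target = redirects[source]
        # Follow the chain until we reach a URL that is not redirected itself
        while target in redirects:
            if target in seen:
                raise ValueError(f"Redirect loop involving {target}")
            seen.add(target)
            target = redirects[target]
        flat[source] = target
    return flat
```

For example, a rule from an http URL to its https version plus a rule from that https URL to the new domain would be rewritten so that both sources point straight to the URL on the new domain.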

Redirects for URLs that have backlinks have been added.

In order to preserve your SEO performance after a website relaunch, you should pay special attention to URLs that have backlinks from other domains. Make sure that none of those backlinks point to error pages after your relaunch.

You can use various tools to compile a full list of your backlinks from other websites and the URLs on your website they point to. If any of the URLs on your website that have backlinks won’t exist any longer after the relaunch, make sure that you set up a redirect to a good destination.

If you can’t find a good equivalent for an old URL with a backlink on your new website, you should probably create a new page that satisfies the needs of the users that click on the link.

Existing redirects have been changed to new targets.

When you relaunch your website and you create new redirects, you probably already have a number of redirects in place. In order to avoid redirect chains, you should change the redirect targets of your old redirects to new destinations. If you just kept the old redirects and then implemented new redirects for some of the targets of these old redirects, you would create redirect chains.

A relaunch is also a good opportunity to get rid of all old redirects that are no longer needed. You can remove all old URLs from your redirect rules that are no longer included in any search engine indexes and that don’t have any links pointing to them. It is also possible to check your log files to find out which old redirects have been accessed by users or bots in the past few months. There is no need to keep old redirects that are no longer being used.
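The log file check can be sketched as follows. We assume access logs in the widespread Common Log Format and redirect rules keyed by request path; both are assumptions about your setup. Keep in mind that log hits are only one criterion: redirects for URLs that are still indexed or have links pointing to them should stay, even if they show up as unused here.

```python
import re

# Matches the request part of a Common Log Format line,
# e.g. "GET /old-page HTTP/1.1"
REQUEST_PATTERN = re.compile(r'"(?:GET|HEAD) (\S+) HTTP/[\d.]+"')


def requested_paths(log_lines):
    """Collect every path requested via GET or HEAD in the log lines."""
    paths = set()
    for line in log_lines:
        match = REQUEST_PATTERN.search(line)
        if match:
            paths.add(match.group(1))
    return paths


def split_redirects_by_usage(redirects, log_lines):
    """Split redirect rules into those that were requested and those that were not."""
    used_paths = requested_paths(log_lines)
    used = {src: dst for src, dst in redirects.items() if src in used_paths}
    unused = {src: dst for src, dst in redirects.items() if src not in used_paths}
    return used, unused
```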

The status code of all permanent redirects is 301.

When setting up redirects, webmasters often don’t pay attention to the status codes of the redirects. Lots of systems set up 302 redirects by default unless specified otherwise. Although there has been a lot of discussion in the SEO scene about whether 302 redirects do the job just as well as 301 redirects, we still strongly recommend using 301 status codes for permanent redirects. If you are not planning on changing the redirect target anytime soon, and you shouldn’t be when you relaunch your website, you should always opt for a 301 redirect.

Off-topic note: There is one situation where a 302 redirect is the better choice for SEO, although it is not really a temporary redirect in this case either. This applies to international websites that redirect their root URL to a home page version in a certain language or country, based on the user’s location or browser language. In this case a 302 redirect should be preferred over a 301, and it is also important to set up hreflang annotations with the value “x-default” for the root URL.
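To find redirects that were set up with a temporary status code by accident, you can request each old URL without following the redirect and look at the status code. This is a minimal sketch using only the Python standard library. The function names are our own, and the root URL exception mentioned above has to be excluded from the URL list manually.

```python
import http.client
from urllib.parse import urlsplit

# Status codes that signal a temporary redirect
TEMPORARY_REDIRECTS = {302, 303, 307}


def fetch_status(url):
    """Request a URL without following redirects; return (status, Location header)."""
    parts = urlsplit(url)
    if parts.scheme == "https":
        conn = http.client.HTTPSConnection(parts.netloc, timeout=10)
    else:
        conn = http.client.HTTPConnection(parts.netloc, timeout=10)
    try:
        path = parts.path or "/"
        if parts.query:
            path += "?" + parts.query
        conn.request("HEAD", path)
        response = conn.getresponse()
        return response.status, response.getheader("Location")
    finally:
        conn.close()


def non_permanent_redirects(urls, fetch=fetch_status):
    """Return (url, status, location) for every URL that redirects temporarily."""
    flagged = []
    for url in urls:
        status, location = fetch(url)
        if status in TEMPORARY_REDIRECTS:
            flagged.append((url, status, location))
    return flagged
```

The `fetch` parameter makes it possible to plug in the results of an existing crawl instead of sending live requests.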

PDFs, images and other file types have been redirected.

A task that is often forgotten when a website is relaunched is the redirection of non-HTML documents. Make sure you also redirect the URLs of all other file types that are driving traffic to your website, that have backlinks or that are indexed by search engines.

It is not really desirable to have PDFs in the indexes of search engines, as PDFs are lousy landing pages for potential clients of your business. As we mentioned above: they are not mobile friendly, they do not allow the user to navigate to other pages on your website, and they are not easily trackable, so you don’t even have good data about their performance. Therefore, it would be best to move the content from PDFs to HTML pages, make the PDFs non-indexable on the new website, and redirect the old PDF URLs to the URLs of the newly created HTML pages.

When redirecting image URLs, it is important to keep in mind that you will only have a good chance of keeping your image rankings if the sizes and file names of the images don’t change. The file name of an image is the part of the URL that comes after the last slash, e.g. “searchVIU-logo-dark.png” in “https://storage.googleapis.com/seoviu_wordpress/blog/2017/01/searchVIU-logo-dark.png”.
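A quick way to check whether image file names survive the relaunch is to compare the file names of old and new image URLs. The sketch below assumes a dictionary of image redirects (old URL to new URL), which is a hypothetical data shape for illustration.

```python
import posixpath
from urllib.parse import urlsplit


def file_name(url):
    """Return the part of the URL path that comes after the last slash."""
    return posixpath.basename(urlsplit(url).path)


def renamed_images(image_redirects):
    """List redirects whose old and new image URLs have different file names."""
    return [
        (old, new)
        for old, new in image_redirects.items()
        if file_name(old) != file_name(new)
    ]
```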

Indexing & crawlability

All pages that are supposed to be indexed are technically indexable.

When your new website goes live, search engines will notice lots of changes to your URLs and will therefore most likely increase their crawling frequency until they have figured everything out. You want to make sure that all new pages that you want to use as landing pages for search traffic are indexable for search engines. If, for some reason, you have been using “noindex” tags on your staging website on pages that should not have them, make sure you remove them before the launch.

The best thing you can do in this context is to crawl the entire new website just before and shortly after the launch and look for obstacles that could prevent important pages from being indexed by search engines, such as “noindex” meta tags, password-protected areas on your website, internal “nofollow” links, or X-Robots-Tag HTTP headers that disallow indexing.
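Two of these obstacles, “noindex” in the robots meta tag and in the X-Robots-Tag header, can be checked with a few lines of code. The following is a simplified sketch that takes the HTML and the response headers of a page you have already fetched. It does not cover password protection, “nofollow” links or directives injected by JavaScript.

```python
from html.parser import HTMLParser


class RobotsMetaParser(HTMLParser):
    """Collect the directives of all robots meta tags in a document."""

    def __init__(self):
        super().__init__()
        self.directives = []

    def handle_starttag(self, tag, attrs):
        if tag != "meta":
            return
        attributes = dict(attrs)
        name = (attributes.get("name") or "").lower()
        if name in ("robots", "googlebot"):
            content = attributes.get("content") or ""
            self.directives.extend(
                part.strip().lower() for part in content.split(",")
            )


def indexing_blockers(html, headers):
    """Return a list of reasons why the page would not be indexed."""
    blockers = []
    parser = RobotsMetaParser()
    parser.feed(html)
    # "none" is shorthand for "noindex, nofollow"
    if "noindex" in parser.directives or "none" in parser.directives:
        blockers.append("meta robots noindex")
    for name, value in headers.items():
        if name.lower() == "x-robots-tag" and "noindex" in value.lower():
            blockers.append("X-Robots-Tag noindex")
    return blockers
```

An empty list means that neither of the two checks found a blocking directive.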

The robots.txt file does not include any unnecessary directives.

Content management or shop systems sometimes automatically include unnecessary or even harmful directives in robots.txt files. When you launch your new website, make sure there are no commands in your robots.txt file that could prevent your website content from being crawled and indexed by search engine bots.

A note on the correct use of robots.txt files for SEO: If you want to exclude certain pages from being indexed, you should use “noindex” meta tags on these pages and NOT block the pages via your robots.txt file. Pages that are blocked via your robots.txt file are excluded from crawling, not from indexing. If search engines think that the blocked pages might be relevant because they receive lots of links (internal or external), they will be indexed, even if they can’t be crawled. Also, “noindex” meta tags on pages that can’t be crawled will be ignored by Google, because the bot is not able to interpret something it doesn’t crawl.

Pay attention to your new robots.txt file and make sure it doesn’t harm your crawling and indexing.
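A simple way to test a new robots.txt file against a list of important URLs is Python’s built-in `urllib.robotparser`. Treat this sketch as a first sanity check only: the standard library parser does not implement every detail of how Google interprets robots.txt (wildcards in paths, for example), and the user agent name is an assumption you may want to change.

```python
from urllib.robotparser import RobotFileParser


def blocked_urls(robots_txt, urls, user_agent="Googlebot"):
    """Return the URLs that the given robots.txt content blocks from crawling."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return [url for url in urls if not parser.can_fetch(user_agent, url)]
```

If any of your SEO landing pages shows up in the result, the corresponding directive deserves a closer look before the launch.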

All important content is accessible for search engine robots.

When your new website goes live, you want to make sure that your important content is actually accessible for search engine robots or, in other words, crawlable. This does not just apply to entire pages that might not be crawlable due to missing internal links or because they are blocked via the robots.txt file, but also to bits of content on pages that might not be available to search engine bots because they are only rendered after a certain user action that robots don’t perform.

It makes sense to crawl your new website, using technologies that mimic how search engine robots crawl, and search for every bit of content that is currently contributing to your SEO success, to make sure that it is still available and crawlable on your new website.

All important internal links are crawlable.

When you launch your new website, you need to make sure that all important internal links are crawlable for search engine robots. But which internal links are important for your SEO performance?

Important internal links can be links that point from strong pages, like the Home page of a website, or a page with lots of external backlinks, to a page that is an important landing page for organic search traffic. Links in the main navigation or other links that point to a target page from lots of different pages can also be important for the ranking of the target page. Often but not always, important internal links have a keyword in their anchor text that drives organic search traffic to the target page.

Depending on how the internal links on your new website are implemented, search engine bots might not find and follow them when they crawl your pages. For instance, links that are not in the source code of the page when the page is first loaded or completely rendered, but only show up after a user action, like a click on an expand button, will probably never be detected by search engines. Make sure you crawl your new website with a tool that crawls like a search engine bot would, and check if all important internal links are crawlable.

All pages that are not supposed to be indexed are excluded from indexing.

Lots of websites, especially online shops, have a number of URLs that should not be indexed, because they are generated automatically, are duplicates of other pages, or do not deliver any value as landing pages for users that search for products or services in search engines.

This class of pages can include, but is not limited to, pagination, internal search result pages, faceted navigation pages, and filtered or sorted variants of pages.

If your new website has pages of this type, make sure that you exclude them from indexing by using “noindex” meta tags, canonical tags, or the equivalent directives in the http headers of your pages.

Limiting the crawling frequency in the days following the relaunch has been considered.

Under certain circumstances, it might be helpful to limit the crawl rate for search engine bots in the days following the relaunch. In Google Search Console, you can go to the site settings, where you will find the option “Limit Google’s maximum crawl rate”.

If you aren’t too sure about the performance of your server in the days following the relaunch, or if you fear there might be lots of errors you will have to fix after the launch, this option might be interesting for you. Limiting the crawl rate will mean that Google’s crawlers will send fewer simultaneous requests to your server and wait longer between requests.

As your entire website has to be crawled and re-crawled several times after a website relaunch, in order for Google to process all of the changes you have made, choosing this option will also mean that the processing of your relaunch will probably take longer than if you don’t limit the crawl rate. On the other hand, this option allows you to fix errors you find after the relaunch before Google detects them.

All in all, this option will probably not be needed for most website relaunches and we ask you to use it with caution. In case of doubt, it is always better to let search engines figure out the optimal crawl rate themselves.

Internal linking

All internal links have been changed to new targets.

When you launch your new website, remember to change all of your internal links to new targets, if some or all of your URLs have changed. Internal links to redirects or even error pages are a bad signal for search engines and they also have a negative impact on user experience. Internal links that are included in the content of single pages, and not in navigation elements that exist on all pages, are the ones that are most likely to be forgotten.

The best way of checking if all of your internal links are working properly is crawling your entire website and checking the status codes of the target URLs of your internal links. Links with target URLs that have 3xx, 4xx and 5xx status codes should be changed to correct targets.
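Once you have crawl data, the check itself is straightforward. The sketch below assumes two inputs from your crawler: a list of internal links as (source, target) pairs and a mapping of URL to status code. Both data shapes are our own illustration.

```python
def links_to_fix(internal_links, status_by_url):
    """Return internal links whose target does not answer with a 2xx status.

    internal_links: iterable of (source_url, target_url) pairs.
    status_by_url: dict mapping URL -> HTTP status code from the crawl.
    Targets that were not crawled at all are reported with status None.
    """
    problems = []
    for source, target in internal_links:
        status = status_by_url.get(target)
        if status is None or status >= 300:
            problems.append((source, target, status))
    return problems
```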

Important pages have not lost any internal links.

Sometimes, pages that drive organic search traffic lose a big number of internal links during a relaunch, for example because they are removed from the main navigation. This can lead to a loss in visibility and traffic, as internal links are an important contributor to the overall SEO performance of a single page. Make sure that your SEO landing pages do not lose any important internal links or a big number of internal links.

In order to detect changes like this, you can crawl your old and your new website, compare them, and check if your important SEO landing pages (or their new equivalents) have lost any internal links pointing to them.
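Such a comparison could look like the following sketch. It assumes link lists from both crawls as (source, target) pairs and a mapping from old URLs to their new equivalents; all names are hypothetical.

```python
from collections import Counter


def count_inlinks(links):
    """Count how many distinct pages link to each target URL."""
    return Counter(target for _source, target in set(links))


def lost_inlinks(old_links, new_links, url_mapping, important_pages):
    """Report important pages that have fewer internal links after the relaunch.

    url_mapping: dict mapping old URL -> new URL (unchanged URLs may be omitted).
    Returns a dict mapping old URL -> (links before, links after).
    """
    old_counts = count_inlinks(old_links)
    new_counts = count_inlinks(new_links)
    report = {}
    for old_url in important_pages:
        new_url = url_mapping.get(old_url, old_url)
        before, after = old_counts[old_url], new_counts[new_url]
        if after < before:
            report[old_url] = (before, after)
    return report
```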

Internal link anchor texts containing important keywords have not been removed.

A change of a label in your main navigation can have a huge impact on the rankings of the linked page. Internal link anchor text is an important signal for search engines to help determine what the content of the linked page is about. This not only goes for links in your main navigation, but for all types of internal links. Therefore, you should make sure that you do not remove any important keywords from your internal link anchor texts when relaunching your website.

If you want to detect changes like this, you should crawl your old and new website and compare the internal link anchor texts of all pages that are currently driving organic search traffic and their equivalents on your new website.

Important pages have not been demoted in the navigation hierarchy.

In addition to the number, quality and anchor texts of internal links, another important SEO factor is the navigation depth of a page. The further away a page is located from the home page, or in other words, the more clicks you need to find the shortest way from the home page to a page, the smaller the likelihood of this page achieving good rankings. When you relaunch your website, make sure that all important pages are as close to the home page as possible and that no important pages end up a lot lower in the hierarchy than they were before the relaunch.

You can crawl your old and new website and compare the navigation level of pages that are currently driving organic search traffic with that of their new equivalents.
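The navigation level of a page is the length of the shortest click path from the home page, which can be calculated with a breadth-first search over the internal link graph. A minimal sketch, assuming your crawler gives you internal links as (source, target) pairs:

```python
from collections import deque


def click_depths(links, home):
    """Return the minimum number of clicks from the home page to each reachable page."""
    graph = {}
    for source, target in links:
        graph.setdefault(source, []).append(target)

    depths = {home: 0}
    queue = deque([home])
    while queue:
        page = queue.popleft()
        for target in graph.get(page, []):
            # The first time a page is reached is via a shortest path
            if target not in depths:
                depths[target] = depths[page] + 1
                queue.append(target)
    return depths
```

Running this on both crawls and comparing the depth of each important page with that of its new equivalent shows which pages have moved further away from the home page. Pages missing from the result are not reachable via internal links at all.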

Check technical tags & elements

hreflang, if needed, has been implemented correctly.

If your website is available in more than one language, or if you have more than one version of your website in the same language, but for users from different parts of the world, you most likely need hreflang annotations on your website. No matter whether hreflang was implemented correctly on your old website or not, make sure there are no mistakes in your hreflang implementation on your new website. Search engines rely on hreflang tags for a better interpretation of international and multilingual website structures. With a correct implementation of hreflang, you help search engines display the right version of your international or multilingual website to users from different countries and those searching in different languages.

After a website relaunch, it is particularly important to make your website easy to crawl for search engine robots, as crawling demand will rise due to the many changes that have been made to the website. Crawling and indexing international and multilingual websites is challenging for search engine bots, and a good hreflang implementation without unnecessary errors helps.
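One of the most common hreflang mistakes is a missing return link: if page A references page B as an alternate version, page B has to reference page A as well. The sketch below checks this for annotations you have collected in a crawl. The input format, a dictionary of URL to {language code: alternate URL}, is an assumption for illustration.

```python
def missing_return_links(hreflang):
    """Find hreflang annotations that are not confirmed by the alternate page.

    hreflang: dict mapping URL -> {language code: alternate URL}.
    Returns (url, language code, alternate URL) for each missing return link.
    """
    problems = []
    for url, annotations in hreflang.items():
        for lang, alternate in annotations.items():
            if alternate == url:
                continue  # Self-reference, nothing to confirm
            back = hreflang.get(alternate, {})
            if url not in back.values():
                problems.append((url, lang, alternate))
    return problems
```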

The new website has correct XML sitemaps.

An XML sitemap does not have an immediate effect on the SEO performance of a website, but it can help search engine crawlers interpret the structure of a website better. Also, it makes it easier for search engine robots to discover new URLs and schedule them for crawling. This is particularly important after a website relaunch, as there is often an entirely new structure with lots of new URLs the search engine bots have to digest. This is why you should make sure that your XML sitemaps are formatted correctly and contain all pages that you want indexed and no pages that you don’t want indexed. URLs that have canonical tags pointing to other URLs, that have “noindex” tags, or that are excluded from crawling via the robots.txt file should not be included in XML sitemaps.

The biggest advantage of having clean and good XML sitemaps is that you can use them in Google Search Console to monitor how well your website is indexed. Google lets you know how many URLs you have submitted in your sitemaps and how many of these URLs are indexed. The closer these two numbers are, and the closer they are to the total number of indexed pages (perform a site:yourdomain.com search in Google to find this number), the better.
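Before submitting a sitemap, you can compare its contents with the list of URLs you actually want indexed. This sketch parses a standard XML sitemap with the Python standard library; the list of indexable URLs is assumed to come from your own crawl, and sitemap index files are not handled.

```python
import xml.etree.ElementTree as ET

# Namespace defined by the sitemaps.org protocol
SITEMAP_NS = {"sm": "http://www.sitemaps.org/schemas/sitemap/0.9"}


def sitemap_urls(xml_text):
    """Extract all <loc> values from the <url> entries of a sitemap."""
    root = ET.fromstring(xml_text)
    return [loc.text.strip() for loc in root.findall("sm:url/sm:loc", SITEMAP_NS)]


def audit_sitemap(xml_text, indexable_urls):
    """Compare the sitemap with the URLs that should be indexed."""
    listed = set(sitemap_urls(xml_text))
    indexable = set(indexable_urls)
    return {
        "should_be_removed": sorted(listed - indexable),
        "missing": sorted(indexable - listed),
    }
```

Both lists should ideally be empty: every URL in the sitemap is meant to be indexed, and every URL meant to be indexed is in the sitemap.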

Pagination is handled correctly on the new website.

If you want your site to display a subset of results, which can improve your user experience and page performance, there are different UX patterns to choose from. If you decide that pagination for long lists or pages with a lot of content works best for your site, make sure the Google crawler can find all of your content. When search engines run into problems with the interpretation of your paginated content, this might hurt the crawl efficiency on your website. Especially after a relaunch you want your website to be as easily crawlable and indexable for search engines as possible, as the search engine robots will have a lot of work to do in order to understand your new website structure.

Google provides recommendations for choosing the best UX pattern for your site and best practices when implementing pagination in this article: Pagination, incremental page loading, and their impact on Google Search.

The new website uses structured data, wherever possible.

If you have been using structured data on your old website, you should migrate at least this structured data to your new website. On top of this, a relaunch is a great opportunity to expand the usage of structured data to all applicable areas of your website.

Structured data helps search engines and other machines better interpret the information you provide on your website. In addition to this, one huge SEO benefit of structured data is that search engines tend to use this information for generating enhanced snippets for your results. This leads to more prominent placements in search results and ultimately to more traffic on your website.

Nowadays, websites should use structured data wherever possible, so make sure your new website does not fall behind.

The new website is served through a secure connection (https).

There is no excuse for not serving your new website through a secure connection. You want your website visitors to know that their data is safe with you. On top of this, https also provides a number of SEO and web analytics benefits for you. If your old website is still running on http and you are planning a relaunch, now is the time to switch.

It might be an option for you to make the switch to https a few weeks before or after the relaunch, in order to minimize the risks associated with URL changes. This decision depends on a number of factors that are individual for each website relaunch. If your relaunch includes a domain switch, you might as well switch to https at the same time, as you are already changing every single URL. If your website relaunch does not entail lots of URL changes, but significant changes to the content or navigation structure of your website, it might be interesting to separate the https switch from the rest of the changes in order to make it easier for search engines to process the different modifications.

All HTTP headers contain correct SEO directives.

Some SEO directives, such as hreflang annotations, canonical tags, or “noindex”, can be implemented in the HTTP header of a document instead of the source code. This location is often overlooked, as lots of popular SEO tools do not check HTTP headers, and not all SEOs are aware that the contents of HTTP headers belong in a website audit. It is advisable to use a crawler that checks HTTP headers on the new website before the relaunch. This way, you can make sure that the SEO directives in the HTTP headers do not conflict with what you are communicating through the source code or in other locations.
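If you want to see what such a check involves, the following sketch reads the X-Robots-Tag and Link headers of a response and compares them with what the HTML says. It assumes you have already collected the response headers and the HTML directives with your crawler. The function names and example URLs are hypothetical, and the parsing is deliberately simple: user-agent prefixes in X-Robots-Tag, for example, are not handled.

```python
import re

# One Link header entry: <url> followed by ;name="value" parameters.
LINK_RE = re.compile(r'<([^>]+)>((?:\s*;\s*[\w-]+="?[^";,]*"?)*)')
PARAM_RE = re.compile(r';\s*([\w-]+)="?([^";,]*)"?')


def seo_directives_from_headers(headers):
    """Extract noindex, canonical and hreflang directives from HTTP headers."""
    lowered = {name.lower(): value for name, value in headers.items()}
    robots_tokens = {
        token.strip().lower()
        for token in lowered.get("x-robots-tag", "").split(",")
    }
    directives = {
        "noindex": "noindex" in robots_tokens,
        "canonical": None,
        "hreflang": {},
    }
    for url, raw_params in LINK_RE.findall(lowered.get("link", "")):
        params = dict(PARAM_RE.findall(raw_params))
        rel = params.get("rel", "").lower()
        if rel == "canonical":
            directives["canonical"] = url
        elif rel == "alternate" and "hreflang" in params:
            directives["hreflang"][params["hreflang"]] = url
    return directives


def find_conflicts(header_directives, html_canonical, html_noindex):
    """List inconsistencies between HTTP header and HTML directives."""
    conflicts = []
    header_canonical = header_directives["canonical"]
    if header_canonical and html_canonical and header_canonical != html_canonical:
        conflicts.append("canonical differs between HTTP header and HTML")
    if header_directives["noindex"] != html_noindex:
        conflicts.append("noindex is set in only one of HTTP header and HTML")
    return conflicts


# Hypothetical response headers for illustration.
example_headers = {
    "X-Robots-Tag": "noindex, nofollow",
    "Link": '<https://www.example.com/page>; rel="canonical", '
            '<https://www.example.com/de/page>; rel="alternate"; hreflang="de"',
}
found = seo_directives_from_headers(example_headers)
print(found)
print(find_conflicts(found, "https://www.example.com/other", False))
```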

In case of a domain switch

Google / Bing / Yandex Webmaster Tools have been set up correctly for the new domain.

The webmaster tools provided by the most popular search engines, or by the search engines most relevant to your business, are the most important and powerful SEO tools you can ask for. After all, you are receiving valuable information directly from the search engines. When you change domains, make sure that you set up and verify the webmaster tools as soon as possible. It might take a few days until your property shows data, and you need to start monitoring errors and developments in the webmaster tools as soon as possible after the website relaunch.

Google / Bing / Yandex have been informed about the domain switch via webmaster tools.

Most search engines allow you to inform them about a domain change via their webmaster tools. This procedure can help speed up the process of replacing all URLs from the old domain with URLs from the new domain in the search engine indexes. It will also help the search engines understand what’s going on when they find plenty of redirects to a new domain all of a sudden.

Google, Bing and Yandex use slightly different terminologies for this: Google has a change of address tool and Yandex helps you with a migrate site tool.

All backlinks have been updated to the new domain / updates have been requested.

When your website relaunch includes a domain switch, it makes sense to change the target of all links to a URL on your new domain. There are plenty of links that you have direct access to, such as links from social media profiles or business directories. For all links that you cannot change yourself, it can be a good idea to get in touch with the website administrators and ask for an update. First of all, it is always good to stay in contact with the people that link to you, as you depend on them as long as links are such an important ranking factor. Also, this is a good opportunity to let more people know about your website.

The old domain and SSL certificate have not been canceled.

When you set up redirects from your old domain to your new domain, these redirects will only work as long as your old domain is still registered with you and, in case you had https URLs on your old website, as long as there is a valid SSL certificate. You should probably keep the old domain and the old SSL certificate forever, especially if there are still links from other domains pointing to URLs on your old website.

Check / define error pages

A 404 page has been correctly implemented on the new website.

After a relaunch, you can expect a higher number of 404 errors on your website than usual. Make sure you have a user-friendly 404 page on your website that helps users who land on it find the information they are looking for. Also, you should make sure that the 404 page is implemented correctly from a technical perspective:

- The 404 page should load on the URL that has been requested. Do NOT redirect faulty URLs to a 404 page with a different URL.

- Make sure the 404 page actually returns a 404 status code, and not a different one.

- Additional tip: If you include “404” in the page title of your 404 page, it is very easy to set up a report for tracking 404 errors in Google Analytics.
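The three points above can be turned into a simple automated check. The sketch below assumes you have requested a URL that should not exist and recorded the final URL, the status code and the page title with your HTTP client or crawler. The function name and the example values are hypothetical.

```python
def audit_404_response(requested_url, final_url, status_code, page_title):
    """Return a list of problems found in the response to a non-existent URL."""
    problems = []
    if final_url != requested_url:
        problems.append("faulty URL was redirected to a different URL")
    if status_code != 404:
        problems.append(f"status code is {status_code} instead of 404")
    if "404" not in page_title:
        problems.append('page title does not contain "404"')
    return problems


# A correctly implemented 404 page produces no problems.
print(audit_404_response(
    "https://www.example.com/does-not-exist",
    "https://www.example.com/does-not-exist",
    404,
    "404 - Page not found",
))

# A "soft 404": redirect to a dedicated error URL that returns 200.
print(audit_404_response(
    "https://www.example.com/does-not-exist",
    "https://www.example.com/error",
    200,
    "Page not found",
))
```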

A custom page for 5xx errors has been implemented.

After a relaunch, you can expect a higher number of server errors than in normal times. For user experience, it is a very good idea to create a custom server error page instead of displaying the standard page your content management or shop system provides.

A custom server error page can also help you track server errors that are experienced by users in your web analytics tool and fix them with a high priority. On top of monitoring server errors this way, you should also keep an eye on the crawl error reports in your favorite search engine’s webmaster tools. For SEO purposes, server errors generated by search engines are an important problem to take care of.

A 503 error code is returned in case the entire website is down.

The HTTP status code 503 means that the service is temporarily unavailable due to maintenance or server overload. Setting up your server so that it returns the correct status code when this actually happens helps search engines understand what’s going on. A 503 error code is a signal to a search engine bot that the server is experiencing temporary issues and that the crawling attempt should be repeated at a later time.

If you return a different status code, like a 404 or a 500 error, search engine bots might think that there is a permanent problem and demote your pages in their rankings. As you can expect temporary downtime after a website relaunch, this is something you should prevent from happening.
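As a rough illustration, a maintenance response could look like the following sketch, built on Python's standard http.server module. The Retry-After header is an optional addition that is not part of the checklist item itself. The one-hour value, the port and the page text are assumptions, and in practice you would usually configure this in your web server or load balancer rather than in a script.

```python
from http.server import BaseHTTPRequestHandler, HTTPServer

RETRY_AFTER_SECONDS = 3600  # assumption: ask clients to retry in one hour


def maintenance_response():
    """Build status, headers and body for a temporary-downtime response."""
    body = b"<h1>We are down for maintenance and will be back shortly.</h1>"
    headers = {
        "Content-Type": "text/html; charset=utf-8",
        "Content-Length": str(len(body)),
        "Retry-After": str(RETRY_AFTER_SECONDS),
    }
    return 503, headers, body


class MaintenanceHandler(BaseHTTPRequestHandler):
    """Answer every GET request with the 503 maintenance response."""

    def do_GET(self):
        status, headers, body = maintenance_response()
        self.send_response(status)
        for name, value in headers.items():
            self.send_header(name, value)
        self.end_headers()
        self.wfile.write(body)


def serve(port=8080):
    """Run the maintenance server until interrupted (blocks)."""
    HTTPServer(("", port), MaintenanceHandler).serve_forever()
```

Calling serve() starts the server. Every URL then returns 503 instead of a 404 or 500, which is the signal you want to send during temporary downtime.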

Javascript / page speed / mobile

The page speed metrics of the new website have been checked.

Page speed is one of the most important factors for user experience and SEO success, especially in a mobile-first world. There is a strong correlation between top rankings in organic search results and page speed metrics. And even if you do manage to rank well with a slow page, very few users will have the patience to wait until your page loads on their mobile devices. They will skip back to Google and click on your competitors’ results instead.

Make sure you check the page speed metrics of your new website before the relaunch. Page speed should be a top priority item for you and there is no excuse for having a slow website.

Implementation changes to the mobile website version have been accounted for.

When you audit your new website to find SEO problems, make sure to crawl it with a tool that can mimic the mobile versions of search engine crawlers. Search engines usually crawl your pages with a desktop crawler and a mobile crawler, because they want to figure out how well your website works for desktop users and mobile users. If there are differences in the user experience, your page might rank differently for desktop and mobile searches.

Google now predominantly uses the mobile version of a page for indexing and ranking, so the findings of the mobile crawler also influence how your pages rank in desktop results. This concept is known as “mobile-first indexing”. This is another reason why you should definitely not neglect mobile crawling when you analyze your new website before launching it.

Changes in JavaScript rendering have been accounted for.

As more and more websites are using JavaScript for better user experience and more dynamic features, search engines are getting better at rendering and interpreting websites that use JavaScript. If your old or your new website depends on JavaScript, make sure you compare them both with a crawling tool that renders JavaScript in a way that is similar to how the most important search engines do it.

Let’s say your new website loads an important amount of internal links only after a portion of JavaScript on your pages has been rendered, and these links were available without JavaScript on your old website. In this case, you should make sure that search engines can actually interpret these links on your new website. A tool that renders JavaScript in a way similar to search engine crawlers can help you with this task.
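The comparison described here can be sketched in a few lines. The example assumes you already have two versions of the same page: the raw HTML as delivered by the server, and the HTML after JavaScript rendering, obtained from a headless browser or a rendering crawler (that step is not shown). The function names and the sample markup are hypothetical.

```python
from html.parser import HTMLParser


class LinkCollector(HTMLParser):
    """Collect the href values of all anchor tags in a document."""

    def __init__(self):
        super().__init__()
        self.links = set()

    def handle_starttag(self, tag, attrs):
        if tag == "a":
            href = dict(attrs).get("href")
            if href:
                self.links.add(href)


def extract_links(html):
    collector = LinkCollector()
    collector.feed(html)
    return collector.links


def links_only_after_rendering(raw_html, rendered_html):
    """Links that exist in the rendered DOM but not in the raw HTML."""
    return sorted(extract_links(rendered_html) - extract_links(raw_html))


# Sample markup for illustration only.
raw = '<nav><a href="/home">Home</a></nav><div id="app"></div>'
rendered = (
    '<nav><a href="/home">Home</a></nav>'
    '<div id="app"><a href="/products">Products</a><a href="/blog">Blog</a></div>'
)
print(links_only_after_rendering(raw, rendered))
```

Every link in the result depends on JavaScript. If important internal links show up here, check whether search engines actually discover them.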

After the migration

The robots.txt file on the new website has been checked.

Once your new website is launched, you want it to be crawled and indexed as quickly as possible. The robots.txt file of your new website might still contain directives from when the page was in the staging environment and you didn’t want it to be crawled yet, so make sure no pages you want crawled are blocked in your robots.txt file. Also, resources that are needed for the complete rendering of your website, such as CSS or JS files, should not be blocked via your robots.txt file.

Important side note: If you have included “noindex” directives on certain pages that you don’t want indexed, make sure NOT to block them via your robots.txt file. It is a very common mistake to set pages to “noindex” and block them via your robots.txt at the same time. The problem with this is that, as you are asking bots not to crawl the pages, they will not read the “noindex” tags and might end up indexing them all the same.
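A quick way to test this is to run a list of URLs against the robots.txt rules. The sketch below uses Python's standard urllib.robotparser module. The robots.txt content and the URLs are made up for illustration. Python's parser does not interpret rules exactly the way every search engine does (wildcards in paths, for instance, are not supported), so treat the result as a first check only.

```python
from urllib.robotparser import RobotFileParser


def blocked_urls(robots_txt, urls, user_agent="Googlebot"):
    """Return the URLs that the given robots.txt blocks for a user agent."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return [url for url in urls if not parser.can_fetch(user_agent, url)]


# Hypothetical robots.txt with a leftover rule that blocks JS files.
ROBOTS_TXT = """\
User-agent: *
Disallow: /staging/
Disallow: /assets/js/
"""

# Pages you want crawled, resources needed for rendering,
# and pages carrying a noindex directive: none should be blocked.
MUST_BE_CRAWLABLE = [
    "https://www.example.com/",
    "https://www.example.com/products/",
    "https://www.example.com/assets/js/app.js",
    "https://www.example.com/internal-search/",
]

print(blocked_urls(ROBOTS_TXT, MUST_BE_CRAWLABLE))
```

In this example, the JavaScript file is reported as blocked, which would prevent complete rendering of the pages that load it.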

The number of indexed pages is being monitored.

When you relaunch your website, the number of indexed pages will normally change. First, your new URLs will be indexed, while most of your old URLs will remain in the index for a while. This temporary rise in the number of indexed pages is totally normal after a website relaunch, as search engines index new URLs pretty quickly, but hesitate to completely remove a URL that no longer returns a 200 status.

The important part is that, within a few weeks (or longer, depending on the size of your website), the number of indexed pages gets as close as possible to the number of pages you want indexed. Also, if the number of indexed pages drops after your relaunch, although your new website is not significantly smaller than your old one, you should probably be worried and run some analyses.

Crawl errors in Google Search Console are being monitored and regularly fixed.

It is important to make your new website as easily crawlable as possible, and every crawl error that search engines encounter is an obstacle to this. After a website relaunch, it is totally normal to experience an elevated number of crawl errors in Google’s (or another search engine’s) webmaster tools.

Best practice would be to use a tool that crawls your new website as search engine crawlers would, so that you can detect and fix any errors before search engines run into them. Without such a tool you need to use the crawl error reports to keep track of new errors, in order to fix them as quickly as possible.

Website and server errors experienced by users are being monitored and fixed.

404, 500 and other errors that are actually experienced by users are probably even more urgent to fix than errors encountered by search engine bots, although both can seriously harm your website performance. Make sure you monitor all errors experienced by users in your web analytics tool.

The prerequisites for this kind of monitoring are:

- You need an error page for every type of error that has the normal website tracking code included on it.

- Error URLs should not redirect to a different URL, but load the error page on the actual URL. This is not just important for tracking, but also for SEO purposes.

- The error pages should have an identifiable page title that you can filter in your web analytics tool.

If these requirements are met (which they should be in any case), you can create two different reports in your web analytics tool. One should include all errors caused by internal links, including the previous page path (the page the false URL is linked from). The other one should include all errors caused by external links, including the full referrer URL (also the page the false URL is linked from, but on a different website). These reports will help you quickly identify broken links and fix them by changing the link and/or redirecting the false URL.
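If your web analytics tool lets you export error page hits together with their referrers, the split into the two reports can be sketched as follows. The export format (a list of records with an error URL and a referrer) and the host name are assumptions for illustration; your tool will have its own field names.

```python
from urllib.parse import urlparse


def split_error_hits(hits, own_host):
    """Split error page hits into internal-link and external-link reports."""
    internal, external = [], []
    for hit in hits:
        referrer = urlparse(hit["referrer"])
        if referrer.hostname == own_host:
            # Internal link: the previous page path is enough.
            internal.append((hit["error_url"], referrer.path))
        elif referrer.hostname:
            # External link: keep the full referrer URL.
            external.append((hit["error_url"], hit["referrer"]))
        # Hits without a referrer (direct visits) belong to neither report.
    return internal, external


# Hypothetical export of hits on error pages.
hits = [
    {"error_url": "/old-product", "referrer": "https://www.example.com/category/shoes"},
    {"error_url": "/old-guide", "referrer": "https://blog.partner.example/post"},
    {"error_url": "/typo", "referrer": ""},
]
internal_report, external_report = split_error_hits(hits, "www.example.com")
print(internal_report)
print(external_report)
```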

The most important SEO KPIs are being monitored.

All important SEO KPIs that can be updated regularly or in real-time, such as revenue or leads from organic search, should be closely monitored in the days after the relaunch. A drop in SEO performance after a website relaunch does not necessarily have to be related to an SEO issue. Everything might be working out perfectly with your organic traffic, but your revenue might still drop because there is an issue in your checkout funnel.

Make sure you get a clear picture of everything that is happening on your website during the days after the relaunch. You can expect some significant changes to your KPIs when you relaunch your website. Make sure you know what is causing the changes, so that you can react, if needed.

The number of landing pages for organic search traffic is being monitored.

The number of pages that receive organic search traffic is a metric that can be very helpful for detecting ranking or indexing problems. It is a number that is often a lot more stable than traffic, which is also heavily influenced by search volume. It makes sense to monitor this number in your web analytics tool, because a significant change to it is often caused by internal factors instead of external ones.

After a website relaunch, it would be best not to see extreme changes to the number of organic search landing pages. The URLs of your old organic search landing pages should be redirecting to correct new targets, which would not change the total number of pages. New pages might start ranking, but this normally happens with steady, and not sudden, growth.

In this context, you can also create a report that filters all organic search landing pages that have the title of an error page, a problem you should take care of as soon as it occurs.

Search analytics data in Google Search Console is being monitored.

Another important report you should keep a very close eye on is the search analytics report in Google Search Console (or its equivalents in Bing’s and Yandex’s webmaster tools). Although Google doesn’t provide the data in real time, but with a delay of two days, this first-hand information about your new website’s organic search performance is crucial.

Look for drops of traffic or impressions for certain pages and keywords. Drops in CTR and average position can also harm you in the long run. If you identify performance problems quickly, you have the chance of reacting and making changes to save your SEO performance.

Old URLs have been crawled to verify that all redirects are working correctly.

Once your new website is online, you should crawl all of your old URLs and make sure that all redirects are working exactly as they are supposed to. Every old URL should either continue returning a working page with a 200 HTTP status, or 301 redirect directly to such a page.

Look for 404 and 5xx errors and redirect chains (several redirects in a row) and fix the problems as soon as possible. When search engine bots and users visit your old URLs, you want them to find the right content, even if the old URL no longer exists.
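The logic of such a redirect check can be sketched as below. To keep the example self-contained, the HTTP request is passed in as a function that returns a status code and a Location value for a URL. Here it is a lookup in a made-up table; in a real check it would be a request that does not follow redirects automatically. All names and URLs are hypothetical.

```python
from urllib.parse import urljoin

REDIRECT_CODES = {301, 302, 303, 307, 308}


def trace_redirects(url, fetch, max_hops=10):
    """Follow redirects hop by hop and return a list of (url, status)."""
    chain = []
    current = url
    for _ in range(max_hops):
        status, location = fetch(current)
        chain.append((current, status))
        if status not in REDIRECT_CODES or not location:
            break
        current = urljoin(current, location)  # resolve relative targets
    return chain


def classify(chain):
    """Summarize the outcome for one old URL."""
    redirects = len(chain) - 1
    final_status = chain[-1][1]
    if final_status != 200:
        return f"error: ends with status {final_status}"
    if redirects > 1:
        return f"redirect chain: {redirects} hops"
    if redirects == 1 and chain[0][1] != 301:
        return f"redirect is {chain[0][1]}, not 301"
    return "ok"


# Made-up responses standing in for real HTTP requests.
RESPONSES = {
    "https://old.example.com/a": (301, "https://www.example.com/a"),
    "https://www.example.com/a": (200, None),
    "https://old.example.com/b": (301, "/b-temp"),
    "https://old.example.com/b-temp": (301, "https://www.example.com/b"),
    "https://www.example.com/b": (200, None),
}


def fake_fetch(url):
    return RESPONSES.get(url, (404, None))


for old_url in ("https://old.example.com/a",
                "https://old.example.com/b",
                "https://old.example.com/c"):
    print(old_url, "->", classify(trace_redirects(old_url, fake_fetch)))
```

The max_hops limit keeps the check from running forever on redirect loops; a loop ends on a redirect status and is therefore reported as an error.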

In order to make sure that your old URLs don’t just redirect, but actually redirect to the right targets, it is advisable to compare the content of the old and new URLs and make sure it matches. This can be a challenging task for a big website, so you should rely on a tool that offers this feature.
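To give an idea of how such a content comparison can work, here is a minimal sketch based on Python's standard difflib module. It assumes you have already extracted the main text of the old page and of its redirect target. The similarity threshold of 0.8 is an arbitrary assumption that you would need to tune for your own content, and dedicated tools typically use more robust methods.

```python
from difflib import SequenceMatcher

SIMILARITY_THRESHOLD = 0.8  # assumption: tune this for your own content


def normalize(text):
    """Lowercase the text and collapse whitespace before comparing."""
    return " ".join(text.lower().split())


def content_similarity(old_text, new_text):
    """Return a similarity ratio between 0.0 and 1.0."""
    return SequenceMatcher(None, normalize(old_text), normalize(new_text)).ratio()


def is_plausible_redirect_target(old_text, new_text,
                                 threshold=SIMILARITY_THRESHOLD):
    return content_similarity(old_text, new_text) >= threshold


# Made-up page texts for illustration.
old_page = "Blue running shoes for trail and road"
new_page_same = "Blue  running shoes for Trail and Road"
new_page_wrong = "Our privacy policy"

print(is_plausible_redirect_target(old_page, new_page_same))
print(is_plausible_redirect_target(old_page, new_page_wrong))
```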

Get the PDF version of our website relaunch SEO checklist

We have created a shiny PDF version of this website relaunch SEO checklist for you to save on your device or print off and put on your desk or wall. Get your copy here: